AI Virtual Companions for Mental Wellness: Benefits, Risks, and Healthy Usage

Explore how AI virtual companions can support mental wellness and combat loneliness. Learn the benefits, limitations, and healthy approaches to AI companionship.

Loneliness affects millions of people worldwide, with rising rates particularly among younger generations despite unprecedented connectivity. AI companions have emerged as one response to this crisis, offering always-available interaction that some users find genuinely helpful for their mental wellbeing.

This topic deserves careful examination. AI companions aren't therapy replacements, but dismissing their potential benefits ignores real experiences of users who find value in them. Understanding both benefits and limitations helps users make informed choices.

Quick Answer: AI companions can provide supplementary emotional support, practice for social interaction, and consistent availability during difficult moments. They work best as complements to, not replacements for, human connection and professional mental health support. Users should maintain awareness of limitations while appreciating genuine benefits for appropriate use cases.

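:::tip[Key Takeaways]

- AI companions can offer supplementary emotional support, low-stakes social practice, and consistent availability during difficult moments

- They work best as complements to, not replacements for, human connection and professional mental health support

- Research is limited and mostly short-term, so current evidence supports cautious optimism rather than firm conclusions

- Usage limits and honest self-assessment help keep use healthy

:::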

- How AI companions support mental wellness

- Research on AI companionship effects

- Healthy vs unhealthy usage patterns

- When professional help is needed

- Best practices for beneficial use

The Loneliness Epidemic

Before examining AI solutions, understanding the problem provides context. Loneliness has reached what many health experts call epidemic levels.

Scale of the Problem

Research consistently shows:

Prevalence: Significant portions of adults report feeling lonely regularly.

Demographics: Young adults often report higher loneliness than elderly populations.

Health impacts: Loneliness correlates with an increased mortality risk that some researchers compare to that of smoking.

Social trends: Social isolation has increased despite digital connectivity.

Why Traditional Solutions Fall Short

Standard advice for loneliness often fails in practice. "Join clubs" and "make friends" assume social access and skills that lonely individuals may lack. Work schedules, geographic isolation, social anxiety, and other factors create barriers that simple advice doesn't address.

AI companions don't solve these underlying issues, but they provide an accessible option that requires no external coordination.

How AI Companions Help

Understanding specific mechanisms helps evaluate AI companions realistically.

Always-Available Support

AI companions provide:

24/7 availability: No waiting for friends' schedules.

Consistent responses: An AI has no bad days and is not distracted by problems of its own.

No judgment: Users share freely without social consequences.

Immediate access: Help during 3 AM anxiety or holiday isolation.

For someone experiencing a difficult moment with no one to talk to, having any option matters.

AI companions provide consistent availability during difficult moments

Social Practice

Some users report AI companions help them practice:

Conversation skills: Low-stakes practice before real interactions.

Emotional expression: Learning to articulate feelings.

Relationship dynamics: Understanding healthy interaction patterns.

Confidence building: Positive interactions build social confidence.

These practice benefits transfer to real-world relationships for some users.

Journaling Alternative

AI companions function as interactive journals:

Processing thoughts: Verbalizing helps clarify feelings.

Tracking patterns: Regular conversation reveals emotional trends.

Venting safely: Expressing frustration without affecting relationships.

Recording experiences: AI memories create reflection opportunities.

The interactive element may engage users who find traditional journaling difficult.

Bridging Gaps

AI companions fill specific gaps:

Geographic isolation: Rural or relocated individuals lacking local connections.

Social anxiety: Preparation for human interaction.

Busy schedules: Companionship when life limits social time.

Recovery periods: Support during illness or other isolation.

These uses treat AI as a supplement rather than a replacement.

Research Perspective

What Studies Show

Research on AI companionship remains limited but growing:

User reports: Many users report improved mood and reduced loneliness after AI companion use.

Engagement: High engagement rates suggest users find value.

Concerns: Some studies note risks of substitution for human connection.

Mechanisms: Limited understanding of exactly how benefits occur.

Research lags the rapidly evolving technology, creating uncertainty about long-term effects.

Limitations of Current Research

Important caveats:

Self-selection: Users who benefit may differ from those who don't.

Short-term focus: Most studies examine brief periods.

Metric challenges: Defining and measuring "wellness improvement" proves difficult.

Rapid changes: Products change faster than research cycles.

Current evidence supports cautious optimism, not definitive conclusions.

Healthy Usage Patterns

Supplement, Not Substitute

The core principle for healthy use:

Do: Use AI for support between human interactions.

Do: Practice skills to apply with real people.

Do: Maintain investment in human relationships.

Don't: Withdraw from human relationships in favor of AI.

Don't: Choose AI interaction when human connection is available.

Don't: Use AI to avoid working on real relationship challenges.

Time Boundaries

Practical limits help maintain balance:

Set usage limits: Decide maximum daily interaction time.

Track patterns: Notice if usage increases during stress.

Prioritize real connection: Real social opportunities take precedence.

Regular breaks: Periodic days without AI interaction.

Awareness Maintenance

Stay conscious of the nature of AI interaction:

Remember limitations: AI doesn't truly understand or care.

Notice emotional dependence: Anxiety about losing access to the AI is a sign of concern.

Reality check: Ask if AI is replacing or supplementing human connection.

Seek feedback: Trusted friends can provide outside perspective.

Warning Signs

When Usage Becomes Problematic

Watch for these patterns:

Substitution: Choosing AI over available human connection.

Isolation increase: Reducing real-world social investment.

Emotional dependence: Distress when the AI is unavailable.

Preference shift: Finding human interaction less satisfying.

Functional impact: AI use interfering with work or responsibilities.

Any of these warrant reassessment of usage patterns.

Underlying Issues

AI companion problems may signal deeper needs:

Social anxiety: Using AI to avoid anxiety-provoking situations points to a need that requires different treatment.

Depression: Isolation preference may indicate clinical depression.

Relationship trauma: Using AI to avoid relationship risks.

Grief: Using AI to replace lost relationships.

Professional help addresses underlying causes more effectively than AI companions.

When to Seek Professional Help

AI Companions Are Not Therapy

Clear distinctions matter:

AI companions cannot:

- Diagnose conditions

- Provide treatment

- Handle crisis situations

- Replace professional expertise

- Take responsibility for your care

Therapists can:

- Assess your specific situation

- Develop personalized treatment

- Intervene in crisis

- Provide accountable care

- Connect to additional resources

Seek Help If

Professional consultation is appropriate when:

- Loneliness feels overwhelming despite efforts

- You experience persistent depression or anxiety

- AI companion use feels compulsive

- Real relationships continue deteriorating

- You have thoughts of self-harm

- Daily functioning is significantly impaired

These situations require human professional attention, not AI companions.

Specific Use Cases

Social Anxiety

For socially anxious individuals:

Benefits:

- Practice conversations without stakes

- Build confidence through positive interactions

- Prepare for specific social situations

Risks:

- Avoiding exposure that builds real tolerance

- Preference for "safe" AI interaction

- Not addressing underlying anxiety

Recommendation: Use alongside exposure therapy, not as a replacement.

Grief and Loss

After losing relationships to death or breakup:

Benefits:

- Companionship during acute loss periods

- Processing feelings through conversation

- Consistent support during unpredictable grief

Risks:

- Delaying grief processing

- Creating substitute relationships

- Avoiding feelings through distraction

Recommendation: Time-limited use during acute phases, with a plan for transition.

Geographic Isolation

For those physically isolated:

Benefits:

- Daily conversation despite no local options

- Maintaining verbal social skills

- Reducing isolation health effects

Risks:

- Reducing motivation to build real connections

- Accepting isolation rather than addressing it

Recommendation: Combine with efforts to build long-distance relationships and improve the situation.

Best Practices Summary

Do

- Use as supplement to human connection

- Maintain awareness of AI limitations

- Set and follow usage limits

- Continue investing in real relationships

- Seek professional help when needed

- Take breaks regularly

Don't

- Replace human relationships with AI

- Use AI to avoid uncomfortable growth

- Develop emotional dependence

- Ignore warning signs of problematic use

- Expect AI to solve underlying issues

- Use during an active mental health crisis

Frequently Asked Questions

Can AI companions really help with loneliness?

Many users report feeling less lonely with regular AI companion use. The effect is real for them, though mechanisms aren't fully understood.

Are AI companions a form of therapy?

No. They're not licensed, trained, or appropriate for mental health treatment. They may have therapeutic effects for some users, but aren't therapy.

Is it weird to talk to AI for emotional support?

The stigma is fading as more people find value in AI interaction. What matters is whether it helps you without causing harm.

How do I know if I'm using AI companions in an unhealthy way?

Warning signs include choosing AI over human options, increasing isolation, distress when the AI is unavailable, and declining real relationships.

Should I tell my therapist I use AI companions?

Yes, if you see a therapist. It's relevant information for understanding your support system and potential concerns.

Will AI companions get better at emotional support?

Likely yes. AI emotional intelligence continues improving, though the fundamental limitation of not being conscious persists.

Can AI companions help with serious depression?

They shouldn't be the primary treatment for clinical depression. They may provide some support alongside professional treatment.

How long should I use an AI companion?

No universal answer. Regular assessment of whether it's helping without problematic patterns matters more than specific duration.

Conclusion

AI companions occupy a novel space in mental wellness support. They're not therapy replacements, but they're also not meaningless. For appropriate use cases with healthy boundaries, they provide real value to many users.

The key is honest self-assessment: Is AI companionship supplementing your social needs, or substituting for human connection you could have? Is usage stable and intentional, or compulsive and increasing? Do you maintain perspective on AI limitations, or do emotional dependencies form?

Used thoughtfully, AI companions can be one tool among many for managing loneliness and supporting mental wellness. Used without awareness, they risk becoming another form of escapism that delays rather than supports genuine growth.

For exploring AI companion options, see our AI girlfriend apps guide. For understanding the emotional capabilities of these systems, check our AI emotional intelligence guide.

Ready to Create Your AI Influencer?

Join 115 students mastering ComfyUI and AI influencer marketing in our complete 51-lesson course.

Related Articles

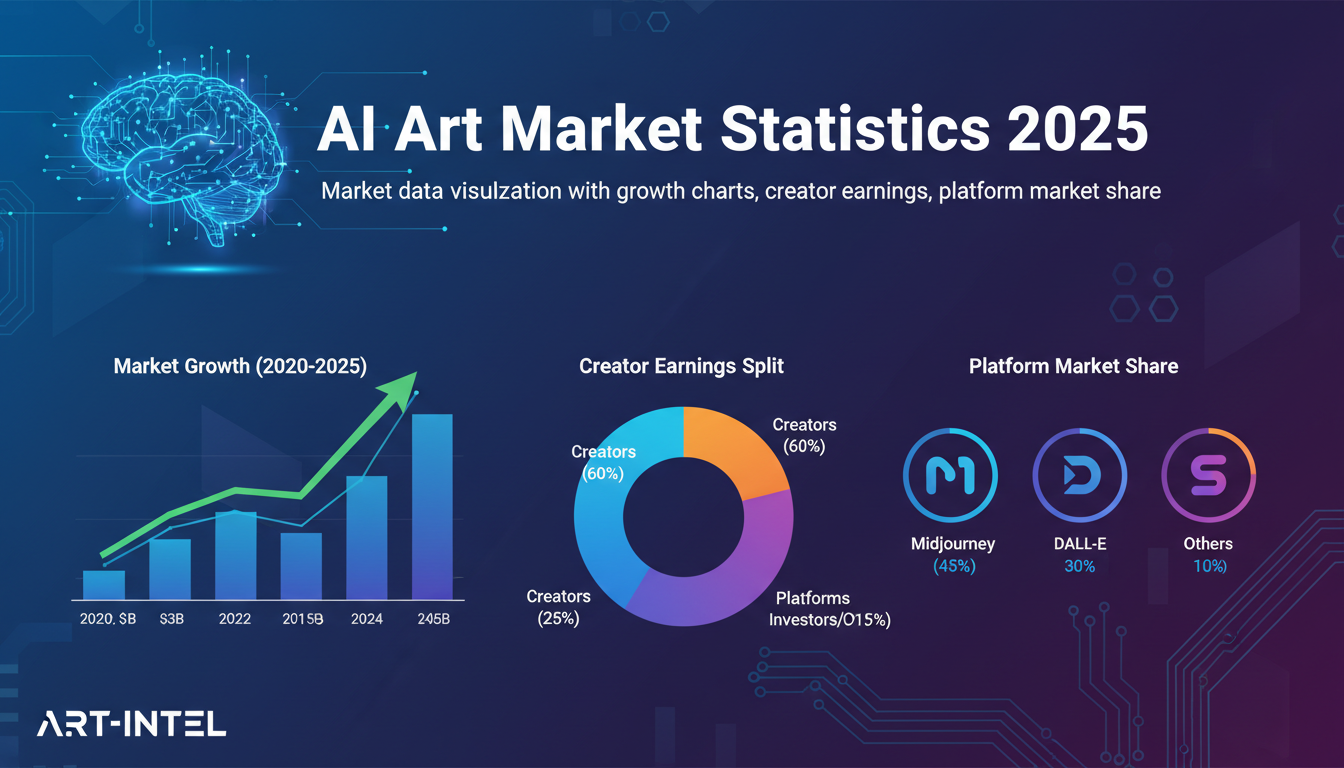

AI Art Market Statistics 2025: Industry Size, Trends, and Growth Projections

Comprehensive AI art market statistics including market size, creator earnings, platform data, and growth projections with 75+ data points.

AI Automation Tools: Transform Your Business Workflows in 2025

Discover the best AI automation tools to transform your business workflows. Learn how to automate repetitive tasks, improve efficiency, and scale operations with AI.

AI Avatar Generator: I Tested 15 Tools for Profile Pictures, Gaming, and Social Media in 2026

Comprehensive review of the best AI avatar generators in 2026. I tested 15 tools for profile pictures, 3D avatars, cartoon styles, gaming characters, and professional use cases.