AI Image Generation Without the Waiting Queue in 2025

Tired of waiting in AI generation queues? Find platforms with instant generation, no delays, and reliable performance.

Position 847 in queue. Estimated wait: 12 minutes. You just want to generate one image. Instead, you're watching a number slowly count down while your creative momentum evaporates.

Queues happen when platforms can't keep up with demand. You don't have to accept this. Better options exist.

Quick Answer: Platforms like Apatero, Leonardo AI, and SeaArt maintain enough infrastructure to avoid significant queuing. Local Stable Diffusion eliminates queues entirely—your GPU, your priority. Pay-per-use platforms often have better capacity than overloaded free tiers.

:::tip[Key Takeaways]

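- Queues form when demand outruns GPU capacity, most often on free tiers and at peak hours

- Pay-per-use platforms and paid priority tiers usually wait less than free tiers

- Running Stable Diffusion locally removes the queue entirely

- Off-peak timing and accounts on several platforms help when a congested service is unavoidable

:::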

- Apatero - Consistent fast generation

- Leonardo AI - Priority for paid users

- Local SD - No queue possible, instant access

- Off-peak timing - Generate when others don't

Why Queues Happen

Understanding the cause helps you avoid it:

Insufficient Infrastructure

Platforms underinvest in GPUs relative to user growth:

- GPUs are expensive

- Demand is unpredictable

- Overprovisioning cuts margins

Free Tier Abuse

Free users generate heavily without revenue:

- High demand, low payment

- Resources stretched thin

- Paying users subsidize free

Peak Time Congestion

Usage spikes at predictable times:

- Evening hours in major markets

- Weekends

- After viral content mentions the platform

Viral Moments

Sudden attention creates surges:

- YouTube tutorials go viral

- Reddit posts blow up

- News coverage drives traffic

Platforms With Minimal Queuing

Apatero

Apatero maintains consistent fast generation through pay-per-use pricing.

Why it's fast:

- Revenue-per-generation funds infrastructure

- No free tier abuse

- Scaled to actual paying demand

- Video generation also available

Typical speed: 5-15 seconds per image

Leonardo AI

Leonardo prioritizes paid subscribers:

- Apprentice+ plans get priority

- Free tier may queue during peaks

- Generally good infrastructure

Typical speed: 5-20 seconds, faster on paid

SeaArt

SeaArt maintains reasonable capacity:

- A cap of 200 free credits limits free-tier abuse

- Premium users prioritized

- Generally acceptable speeds

Typical speed: 10-20 seconds

Local Stable Diffusion

Your hardware, your queue:

- Zero queue ever

- Speed depends on your GPU

- No waiting for anyone else

Typical speed: 5-30 seconds depending on hardware

Strategies to Avoid Queues

1. Generate Off-Peak

Queues are worst during:

- 6 PM - 11 PM US Eastern

- Weekends globally

- Immediately after platform updates/announcements

Better times:

- Early morning (any timezone)

- Weekday afternoons

- Late night
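The peak windows above can be turned into a quick check before you start a session. This is a minimal sketch, assuming the 6 PM to 11 PM US Eastern window and the weekend rule listed here; the function names are made up for illustration, and real congestion varies by platform. Treating the weekend in Eastern time is a simplification of "weekends globally".

```python
from datetime import datetime
from zoneinfo import ZoneInfo

# Peak window taken from the list above: 6 PM to 11 PM US Eastern, plus weekends.
PEAK_START_HOUR = 18
PEAK_END_HOUR = 23  # exclusive: 11:00 PM onward counts as off-peak


def is_peak(eastern_time: datetime) -> bool:
    """Return True if the given US Eastern wall-clock time is likely congested."""
    is_weekend = eastern_time.weekday() >= 5  # Monday is 0, so 5 and 6 are Sat/Sun
    in_evening_window = PEAK_START_HOUR <= eastern_time.hour < PEAK_END_HOUR
    return is_weekend or in_evening_window


def now_eastern() -> datetime:
    """Current time in US Eastern (needs the system time zone database)."""
    return datetime.now(ZoneInfo("America/New_York"))
```

Calling `is_peak(now_eastern())` gives a rough yes/no answer; if it returns `True`, a local setup or a paid tier is more likely to save you a wait.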

2. Use Paid Tiers

Paid users typically get priority:

- Faster queue access

- Reserved capacity

- Better GPUs sometimes

Even cheap tiers ($5-10/month) often eliminate queuing.

3. Diversify Platforms

When one platform queues, use another:

- Keep accounts on multiple platforms

- Same prompts work across most

- Switch based on current conditions

4. Cache Your Generations

Reduce total generations needed:

- Save successful prompts

- Download and organize outputs

- Don't regenerate unnecessarily
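One way to avoid regenerating is to keep a small index of what you have already made. The sketch below is an assumption about how you might organize this yourself, not a feature of any platform: `GenerationLog`, `cache_key`, and the JSON index file are hypothetical names, and it only tracks file paths you saved manually.

```python
import hashlib
import json
from pathlib import Path
from typing import Optional


def cache_key(prompt: str, settings: dict) -> str:
    """Stable hash of the prompt plus settings, so identical requests match."""
    payload = json.dumps({"prompt": prompt, "settings": settings}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]


class GenerationLog:
    """Maps prompt + settings to a saved image path, persisted as one JSON file."""

    def __init__(self, index_file: Path):
        self.index_file = index_file
        # Load earlier entries if the index already exists on disk.
        self.entries = json.loads(index_file.read_text()) if index_file.exists() else {}

    def lookup(self, prompt: str, settings: dict) -> Optional[str]:
        """Return the saved image path for this request, or None if never generated."""
        entry = self.entries.get(cache_key(prompt, settings))
        return entry["image_path"] if entry else None

    def record(self, prompt: str, settings: dict, image_path: str) -> None:
        """Store the prompt, settings, and output path, then write the index to disk."""
        self.entries[cache_key(prompt, settings)] = {
            "prompt": prompt,
            "settings": settings,
            "image_path": image_path,
        }
        self.index_file.write_text(json.dumps(self.entries, indent=2))
```

Check `lookup` before submitting a prompt and call `record` after downloading a result you like. Because the prompt text is stored alongside the path, the same file doubles as a list of prompts that worked.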

5. Batch Strategically

If queues are unavoidable:

- Queue multiple generations at once

- Do other work while waiting

- Maximize value of queue time

Speed Comparison

| Platform | Typical Speed | Queue Risk | Priority Option |
|---|---|---|---|
| Midjourney | 30-60s | Moderate | Subscription tier |
| DALL-E | 10-30s | Low | N/A |
| Apatero | 5-15s | Low | Pay-per-use |
| Leonardo AI | 5-20s | Low-Moderate | Paid tiers |
| SeaArt | 10-20s | Moderate | Premium |
| Perchance | 10-30s | Variable | N/A |
| Local SD | 5-30s | None | N/A |

The Local Solution

Running Stable Diffusion locally eliminates queues permanently:

Advantages

- Zero queue ever

- Speed limited only by your hardware

- Generate during any platform outage

- Complete independence

Requirements

- NVIDIA GPU with 8GB+ VRAM

- Some technical knowledge

- ~$300-1000 for a capable GPU

Expected Performance

- RTX 3060: ~15-25 seconds per image

- RTX 3080: ~8-15 seconds per image

- RTX 4090: ~3-8 seconds per image
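To see what those per-image times mean over a working session, you can convert them to images per hour. This is plain arithmetic on the rough ranges listed above, not a benchmark; real throughput depends on model, resolution, and step count.

```python
# Seconds-per-image ranges copied from the list above (fastest, slowest).
SECONDS_PER_IMAGE = {
    "RTX 3060": (15, 25),
    "RTX 3080": (8, 15),
    "RTX 4090": (3, 8),
}


def images_per_hour(seconds_per_image: float) -> float:
    """Convert a per-image time into an hourly rate (3600 seconds in an hour)."""
    return 3600 / seconds_per_image


def hourly_range(gpu: str) -> tuple[int, int]:
    """Return (low, high) images per hour for a GPU in the table above."""
    fastest, slowest = SECONDS_PER_IMAGE[gpu]
    return int(images_per_hour(slowest)), int(images_per_hour(fastest))


for gpu in SECONDS_PER_IMAGE:
    low, high = hourly_range(gpu)
    print(f"{gpu}: roughly {low}-{high} images per hour")
```

On these figures an RTX 3060 lands at roughly 144 to 240 images per hour with no queue time at all.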

Worth the investment if you generate regularly and value time.

Hybrid Approach

Many users combine methods:

- For daily work: Local SD (no queue, already have GPU)

- For video: Apatero (fast, no queue, video capability)

- For specific models: Cloud platforms as needed

- For mobile: Cloud platforms from any device

Queue Time Calculator

Before committing to a queued generation:

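Queue position × average generation time ≈ your wait

Example: a queue 50 deep at a 30-second average is 50 × 30 = 1,500 seconds, or about a 25-minute wait. This assumes jobs are processed one at a time; a platform that runs several jobs in parallel will clear the queue faster.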

Ask yourself: Is this prompt worth 25 minutes? Or should you:

- Use a different platform

- Wait for off-peak

- Use local SD instead
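The estimate and the decision that follows can be sketched in a few lines. The function names are hypothetical, and the `parallel_workers` parameter is an assumption added here (platforms rarely tell you how many jobs run at once); leave it at 1 to match the simple formula.

```python
from typing import Optional


def queue_wait_seconds(queue_position: int, avg_generation_seconds: float,
                       parallel_workers: int = 1) -> float:
    """Estimated wait: queue position times average generation time.

    parallel_workers is an assumption added for illustration; with the
    default of 1 this is exactly the formula above.
    """
    if parallel_workers < 1:
        raise ValueError("parallel_workers must be at least 1")
    return queue_position * avg_generation_seconds / parallel_workers


def faster_option(wait_seconds: float, alternatives: dict[str, float]) -> Optional[str]:
    """Name of the quickest alternative that beats the queued wait, else None."""
    if not alternatives:
        return None
    name, seconds = min(alternatives.items(), key=lambda item: item[1])
    return name if seconds < wait_seconds else None


wait = queue_wait_seconds(queue_position=50, avg_generation_seconds=30)
print(f"Estimated wait: {wait / 60:.0f} minutes")  # 50 * 30 s = 1500 s = 25 minutes
```

Passing your own observed times, for example `faster_option(wait, {"local SD": 20, "other platform": 45})`, returns the name of the quicker route when one exists.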

Frequently Asked Questions

Why do I queue when I'm paying?

Some paid tiers share infrastructure with free tiers. Higher tiers usually have dedicated priority.

Does generation quality affect speed?

Yes. Higher resolution and more complex features take longer regardless of queue.

Can I skip the queue somehow?

Only by paying for a priority tier or switching to a less-congested platform.

Is local SD really queue-free?

Yes. Your GPU, your generations. No external dependencies.

Which platform is fastest overall?

Local SD with good hardware, then Apatero among cloud options.

Do queues indicate a bad platform?

Not necessarily, but consistent long queues suggest infrastructure underinvestment.

The Bottom Line

Queues waste your time and kill creative momentum. Solutions:

- Use pay-per-use platforms like Apatero that scale with demand

- Go local with Stable Diffusion for queue-free generation

- Pay for priority on subscription platforms

- Generate off-peak when you must use congested platforms

- Diversify across multiple platforms

Your time matters. Don't spend it watching queue counters.

Ready to Create Your AI Influencer?

Join 115 students mastering ComfyUI and AI influencer marketing in our complete 51-lesson course.

Related Articles

Adobe Firefly vs Midjourney vs Ideogram 2026: Which Wins

Brand-safe licensing, scroll-stopping aesthetics, or text rendering. Three tools optimized for three different jobs, tested against real briefs.

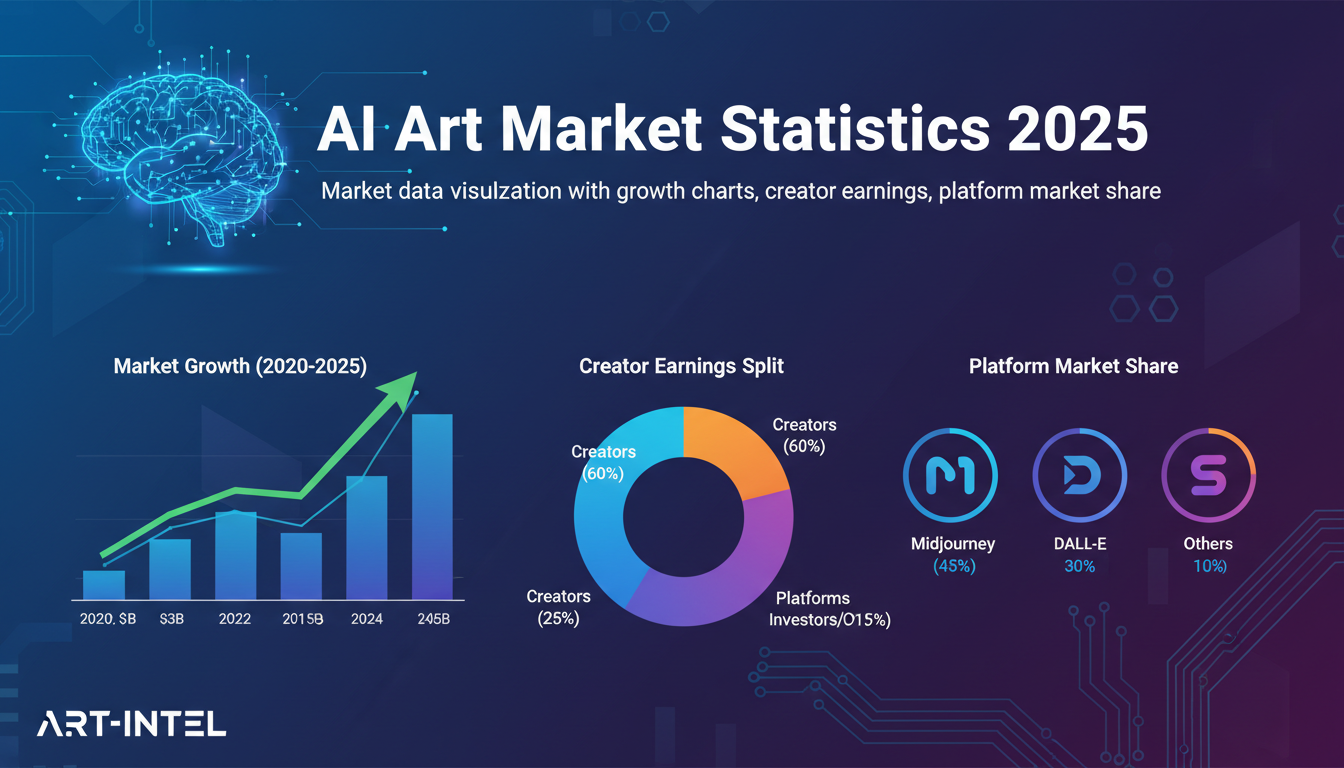

AI Art Market Statistics 2025: Industry Size, Trends, and Growth Projections

Comprehensive AI art market statistics including market size, creator earnings, platform data, and growth projections with 75+ data points.

AI Automation Tools: Transform Your Business Workflows in 2025

Discover the best AI automation tools to transform your business workflows. Learn how to automate repetitive tasks, improve efficiency, and scale operations with AI.