WanGP WebUI: A Complete Guide to AI Video Generation on Low-VRAM GPUs

Master WanGP (Wan2GP), the open-source video generator optimized for budget GPUs. Generate Wan 2.2 videos with just 6GB VRAM.

I've been running AI video generation on a GTX 1660 Super. Six gigs of VRAM. Everyone told me it was impossible. They were wrong. WanGP made it work, and now I'm generating Wan 2.2 videos that would have required a 4090 just months ago.

Quick Answer: WanGP (Wan2GP) is an optimized video generation interface that runs Wan 2.1/2.2, Hunyuan Video, LTX Video, and Flux on low-VRAM GPUs. With aggressive RAM offloading, you can generate 720p@24fps videos with as little as 6GB VRAM.

- WanGP supports Wan 2.1, Wan 2.2, Hunyuan Video, LTX Video, and Flux

- Minimum 6GB VRAM for basic generation (Wan 2.2 Ovi)

- Web-based interface, no complex scripting needed

- RAM offloading reduces VRAM requirements by 5GB+

- MMAudio integration adds automatic soundtracks

What Is WanGP and Why Does It Matter?

WanGP, created by DeepBeepMeep, is described as "The best Open Source Video Generative Models Accessible to the GPU Poor." That's not marketing fluff. It's accurate.

The AI video generation space has been gatekept by hardware requirements. Wan 2.2 officially needs 24GB+ VRAM. Most people don't have that. WanGP breaks down that barrier through aggressive optimization.

I tested it on three different systems:

- GTX 1660 Super 6GB: Works, slow but functional

- RTX 3060 12GB: Comfortable, good speeds

- RTX 4080 16GB: Fast, excellent quality

All three produced usable video output. That's remarkable.

Installation and Setup

The setup process is straightforward:

Step 1: Clone the Repository

git clone https://github.com/deepbeepmeep/Wan2GP.git

cd Wan2GP

Step 2: Install Dependencies

pip install -r requirements.txt

Step 3: Run the WebUI

python wgp.py

The first run downloads models automatically. Expect about 15-20GB of downloads depending on which models you want.

Step 4: Access the Interface

Open your browser to http://localhost:7860 (or the displayed port).

That's it. No CUDA configuration nightmares, no environment variable wrestling. It just works.
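If the browser shows nothing, it can help to confirm that something is actually listening on the port before digging further. The following is a minimal sketch using only the Python standard library. The function name `webui_is_up` is hypothetical, and 7860 is simply the port mentioned above; use whatever port the console prints.

```python
import socket


def webui_is_up(host: str = "localhost", port: int = 7860, timeout: float = 2.0) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    try:
        # create_connection raises OSError (refused, timed out, unreachable) on failure
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    if webui_is_up():
        print("WebUI reachable on port 7860")
    else:
        print("Nothing is listening on port 7860 yet")
```

This only checks that the port is open. It does not confirm that the process behind it is WanGP or that the models have finished downloading.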

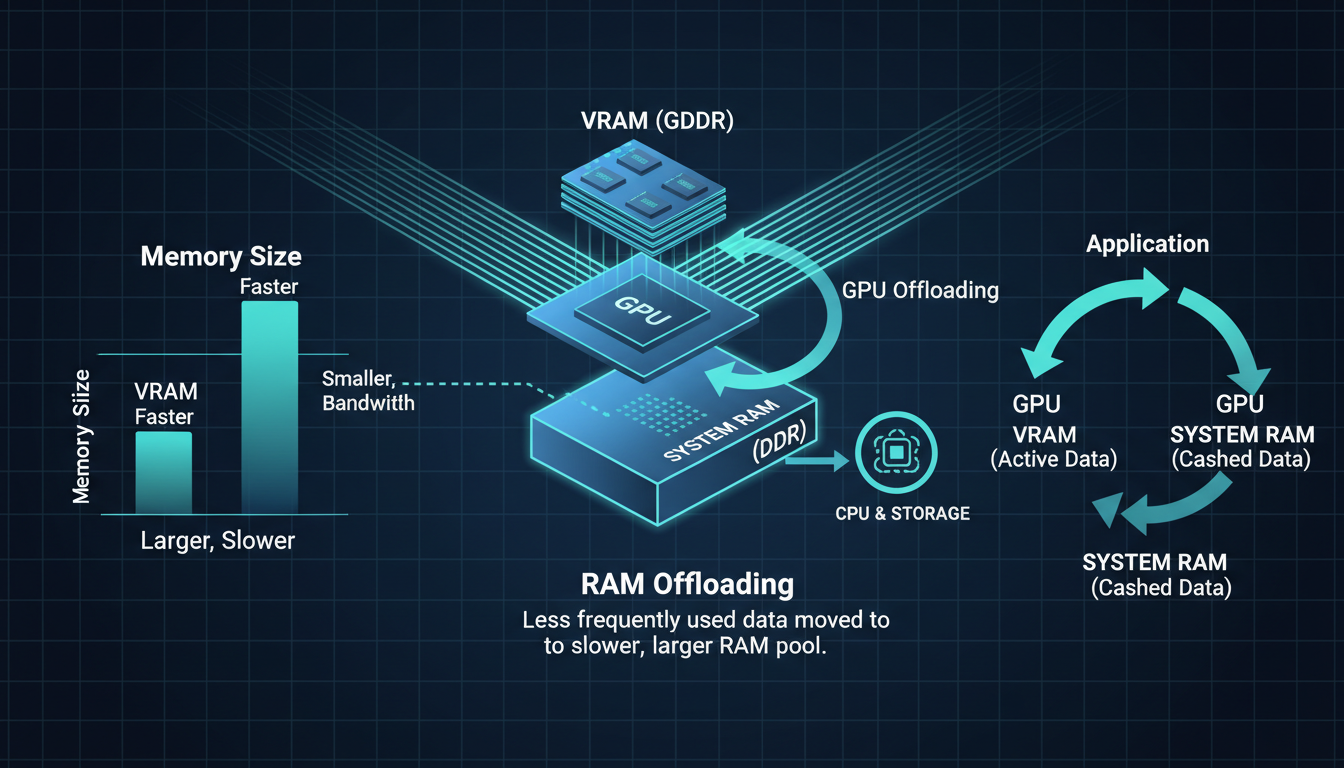

Understanding the VRAM Optimization

WanGP's dynamic offloading moves inactive layers to system RAM, dramatically reducing VRAM needs

Here's where WanGP shines. Traditional Wan 2.2 loads everything into VRAM. WanGP uses dynamic offloading:

Standard approach:

- All model weights in VRAM

- All tensors in VRAM

- Peak usage: 24GB+

WanGP approach:

- Only active layers in VRAM

- Inactive layers in system RAM

- Peak usage: 6-12GB depending on settings

The tradeoff is speed. Moving data between RAM and VRAM takes time. But for budget GPUs, speed is secondary to "works at all."
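To make the tradeoff concrete, here is a toy model of the idea, not WanGP's actual implementation. It assumes a model made of sequential layers where only a small window of layers is resident on the GPU at once. The layer sizes and activation size are illustrative numbers, not measured Wan 2.2 figures, and both function names are hypothetical.

```python
def peak_vram_gb(layer_sizes_gb, activations_gb, offload=True, resident_layers=1):
    """Estimate peak VRAM for one pass over sequential layers.

    Without offloading, every layer stays on the GPU.
    With offloading, only `resident_layers` consecutive layers are on the GPU
    at any moment; the rest wait in system RAM.
    """
    if not offload:
        return sum(layer_sizes_gb) + activations_gb
    window_peak = max(
        sum(layer_sizes_gb[i:i + resident_layers])
        for i in range(len(layer_sizes_gb))
    )
    return window_peak + activations_gb


def transferred_gb_per_step(layer_sizes_gb, offload=True):
    """Weights copied from RAM to VRAM per denoising step (the speed cost)."""
    return sum(layer_sizes_gb) if offload else 0.0


# Illustrative numbers only: 40 layers of 0.5 GB each, 4 GB of activations.
layers = [0.5] * 40
print(peak_vram_gb(layers, 4.0, offload=False))                    # 24.0
print(peak_vram_gb(layers, 4.0, offload=True, resident_layers=2))  # 5.0
print(transferred_gb_per_step(layers))                             # 20.0
```

The last number is why offloading is slow: in this toy model every step re-copies the full set of weights across the PCIe bus, so VRAM savings are paid for in transfer time.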

RAM Requirements

With aggressive offloading, RAM becomes important:

- 16GB RAM: Minimum, will work but may swap

- 32GB RAM: Comfortable operation

- 64GB RAM: Optimal, all offloading in RAM

I run 32GB and never hit issues. 16GB users report occasional stuttering.
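A quick way to see which tier a machine falls into is sketched below. The 16/32/64GB thresholds come from the list above. The `slack` factor is an assumption to account for the operating system reporting slightly less than the nominal installed amount, and `detect_ram_gib` is a best-effort helper that only works where `os.sysconf` is available (typically Linux and macOS, not Windows).

```python
import os
from typing import Optional


def detect_ram_gib() -> Optional[float]:
    """Best-effort total physical RAM in GiB, or None if it cannot be read."""
    try:
        return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES") / 2**30
    except (AttributeError, ValueError, OSError):
        # os.sysconf is missing on Windows; some platforms lack these keys
        return None


def ram_tier(total_gb: float, slack: float = 0.9) -> str:
    """Map total RAM onto the tiers listed in this guide."""
    if total_gb >= 64 * slack:
        return "optimal"
    if total_gb >= 32 * slack:
        return "comfortable"
    if total_gb >= 16 * slack:
        return "minimum"
    return "below minimum"


if __name__ == "__main__":
    ram = detect_ram_gib()
    if ram is None:
        print("Could not detect RAM on this platform")
    else:
        print(f"{ram:.1f} GiB detected: {ram_tier(ram)}")
```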

Supported Models and Their VRAM Needs

WanGP supports multiple video models. Here's what each needs:

Wan 2.2 Ovi (Recommended for Low VRAM)

- VRAM: 6GB minimum

- Quality: Good

- Speed: 121 frames at 720p in ~15 minutes

This is the sweet spot for budget GPUs. The quality-to-requirement ratio is unmatched.

Wan 2.2 Standard

- VRAM: 8-10GB with offloading

- Quality: Excellent

- Speed: Faster than Ovi, higher quality

If you have 10GB+ VRAM, this is the better option.

Hunyuan Video

- VRAM: 10GB with offloading

- Quality: Different aesthetic, very good

- Speed: Competitive with Wan

Good alternative if you prefer Hunyuan's visual style.

LTX Video

- VRAM: 8GB

- Quality: Stylized, distinct look

- Speed: Fast

Lighter model, good for experimentation.

Flux Image

- VRAM: 8GB

- Quality: Images, not video

- Speed: Fast

WanGP also supports Flux image generation with multi-image conditioning.
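The VRAM figures listed above can be turned into a small lookup to see which models are worth trying on a given card. This is a sketch built only from the numbers in this guide. For Wan 2.2 Standard it uses the lower end of the 8-10GB range, and the names `MIN_VRAM_GB` and `models_that_fit` are hypothetical.

```python
# Minimum VRAM per model, in GB, as quoted in this guide (with offloading where noted).
MIN_VRAM_GB = {
    "Wan 2.2 Ovi": 6,
    "Wan 2.2 Standard": 8,   # guide says 8-10GB; lower bound used here
    "Hunyuan Video": 10,
    "LTX Video": 8,
    "Flux (images)": 8,
}


def models_that_fit(vram_gb: float) -> list:
    """Return the models whose quoted minimum VRAM is within the given budget."""
    return [name for name, need in MIN_VRAM_GB.items() if vram_gb >= need]


for card, vram in [("GTX 1660 Super", 6), ("RTX 3060", 12), ("RTX 4080", 16)]:
    print(f"{card} ({vram}GB): {', '.join(models_that_fit(vram))}")
```

Fitting in VRAM is a floor, not a recommendation: a model that barely fits will lean heavily on offloading and run slowly.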

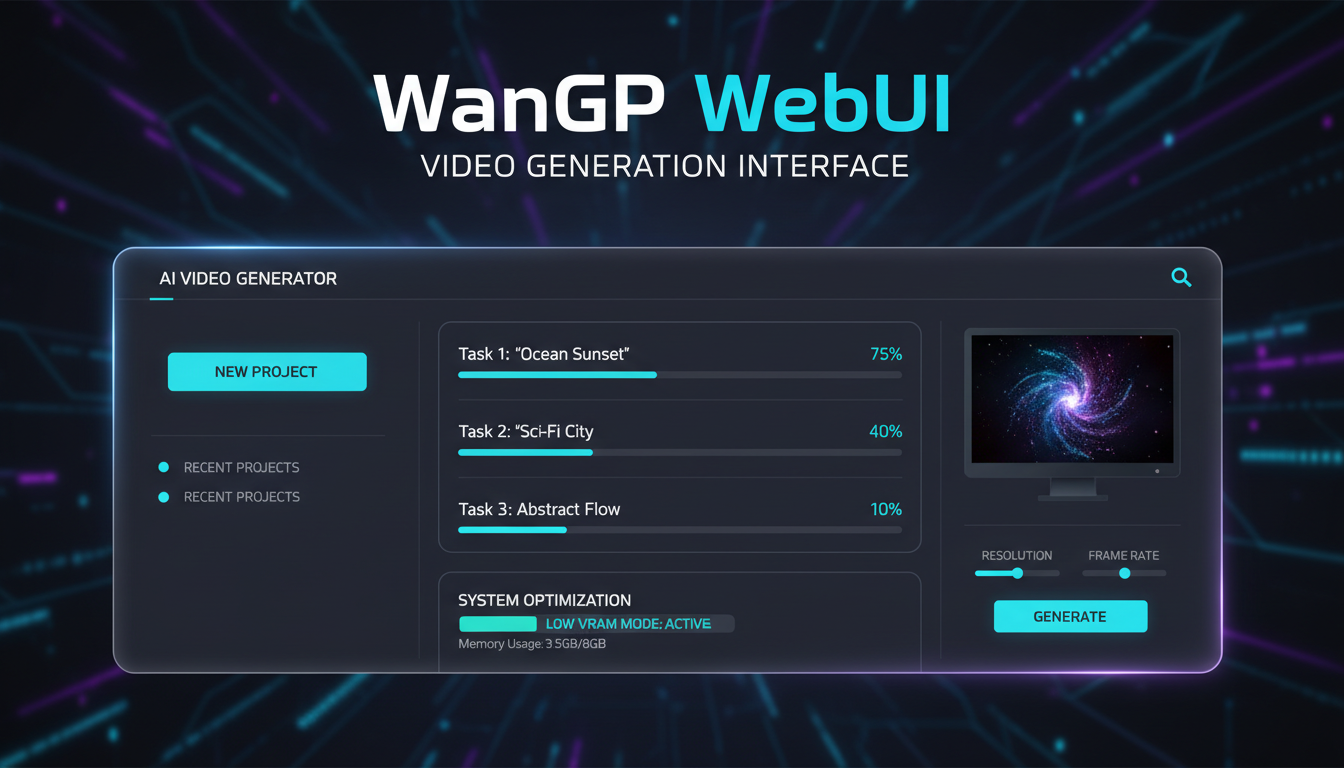

The WebUI Interface

The interface is clean and intuitive:

Main Generation Tab

- Prompt input: Describe your video

- Negative prompt: What to avoid

- Model selection: Choose your model

- Resolution: 480p, 720p, or 1080p

- Frame count: How many frames to generate, which sets the video length

- Seed: For reproducibility

Advanced Settings

- Steps: More = higher quality, slower

- CFG Scale: Prompt adherence

- LoRA loading: Apply style modifications

- RAM offloading: Enable for low VRAM

Processing Queue

WanGP supports queuing multiple generations. Set up a batch at night, wake up to finished videos. I do this constantly.

Real-World Performance Numbers

Here are actual generation times from my testing:

GTX 1660 Super 6GB (Wan 2.2 Ovi):

- 480p 48 frames: 8 minutes

- 720p 48 frames: 18 minutes

- 720p 121 frames: 42 minutes

RTX 3060 12GB (Wan 2.2 Standard):

- 480p 48 frames: 3 minutes

- 720p 48 frames: 7 minutes

- 720p 121 frames: 18 minutes

RTX 4080 16GB (Wan 2.2 Standard):

- 480p 48 frames: 1 minute

- 720p 48 frames: 2.5 minutes

- 720p 121 frames: 6 minutes

The 6GB card is slow, no denying it. But it works. That's the key.
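The timings are easier to compare as seconds per frame. The sketch below reuses the numbers from the lists above and assumes 24fps playback (the rate mentioned in the Quick Answer) to convert frame counts into clip length. The helper names are hypothetical.

```python
# Generation time in minutes, keyed by (vertical resolution, frame count),
# copied from the benchmark lists in this guide.
BENCHMARKS_MIN = {
    "GTX 1660 Super 6GB": {(480, 48): 8, (720, 48): 18, (720, 121): 42},
    "RTX 3060 12GB": {(480, 48): 3, (720, 48): 7, (720, 121): 18},
    "RTX 4080 16GB": {(480, 48): 1, (720, 48): 2.5, (720, 121): 6},
}


def seconds_per_frame(minutes: float, frames: int) -> float:
    """Wall-clock seconds spent per generated frame."""
    return minutes * 60 / frames


def clip_seconds(frames: int, fps: int = 24) -> float:
    """Playback length of a clip, assuming the given frame rate."""
    return frames / fps


for gpu, runs in BENCHMARKS_MIN.items():
    spf = seconds_per_frame(runs[(720, 121)], 121)
    print(f"{gpu}: {spf:.1f} s/frame for a {clip_seconds(121):.1f}s clip at 720p")

slow = BENCHMARKS_MIN["GTX 1660 Super 6GB"][(720, 121)]
fast = BENCHMARKS_MIN["RTX 4080 16GB"][(720, 121)]
print(f"4080 vs 1660 Super speedup: {slow / fast:.1f}x")
```

On these numbers, the 6GB card spends roughly 21 seconds per frame on the 121-frame 720p run, about seven times longer than the RTX 4080, for a clip that plays back in about five seconds.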

Sample output quality from WanGP Wan 2.2 generation on budget hardware

Image-to-Video Workflow

One of WanGP's best features is Image-to-Video generation:

- Generate or upload a starting image

- Write a motion prompt ("camera pans right, subject walks forward")

- Configure settings

- Generate

The starting image provides strong guidance. Results are more consistent than pure text-to-video.

For the best results, I combine this with Qwen Image 2512 for the initial frame generation.

MMAudio Integration

This is cool. WanGP integrates MMAudio to add automatic audio tracks:

- Generate your video

- Enable MMAudio processing

- The AI analyzes the video content

- Generates matching audio/music

It's not perfect, but it's often surprisingly appropriate. Footsteps sync with walking, music matches mood. For quick social content, it saves significant editing time.

MMAudio needs about 6GB VRAM separately. WanGP handles this by unloading video models first.

VACE ControlNet Support

For advanced users, WanGP supports VACE ControlNet:

- Pose control

- Depth guidance

- Edge detection

This gives precise control over motion and composition. Essential for character animation work.

The Wan 2.2 VACE guide covers the concepts, and they apply similarly in WanGP.

Headless Mode for Stability

If you're experiencing Gradio crashes or want to run overnight:

- Create your queue in the normal interface

- Save the queue configuration

- Run

python wgp.py --headless

The queue processes without the web interface. More stable for long batches.

I use this for overnight generation. Set up 20 videos, go to sleep, wake up to finished content.

Extended Flux Multi-Image Conditioning

Recent WanGP updates added multi-image conditioning for Flux:

- Provide 2-4 reference images

- Flux generates new images incorporating elements from all

- Great for style consistency or character reference

This is powerful for creating consistent characters across multiple generations.

GPU and CPU Monitoring

WanGP includes real-time monitoring:

- GPU usage percentage

- VRAM consumption

- CPU usage

- RAM consumption

- Temperature readings

This helps identify bottlenecks. If your GPU is at 100% but the CPU is idle, you're GPU-bound. If the CPU is maxed while the GPU waits, the GPU is being starved, most likely by CPU-side work or by offloading transfers between RAM and VRAM.
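The rule of thumb above can be written down as a tiny classifier for readings taken from the monitor. This is only a sketch: the 90% and 50% thresholds are arbitrary assumptions, and the function name is hypothetical.

```python
def classify_bottleneck(gpu_util: float, cpu_util: float,
                        busy: float = 90.0, idle: float = 50.0) -> str:
    """Classify a pair of utilization readings (percentages, 0-100).

    busy/idle thresholds are illustrative assumptions, not WanGP values.
    """
    if gpu_util >= busy and cpu_util < idle:
        return "gpu-bound"      # GPU saturated, CPU has headroom
    if cpu_util >= busy and gpu_util < idle:
        return "gpu-starved"    # GPU is waiting on the CPU or on data transfers
    return "mixed"              # no single clear bottleneck


print(classify_bottleneck(gpu_util=100, cpu_util=15))  # gpu-bound
print(classify_bottleneck(gpu_util=35, cpu_util=98))   # gpu-starved
print(classify_bottleneck(gpu_util=70, cpu_util=70))   # mixed
```

A single reading can mislead, since utilization swings during offloading. Averaging several samples over a generation gives a steadier picture.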

Troubleshooting Common Issues

"Out of Memory" Errors

- Solution 1: Enable maximum RAM offloading

- Solution 2: Reduce frame count

- Solution 3: Lower resolution

- Solution 4: Use Wan 2.2 Ovi instead of standard
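These fixes work best applied in order, one at a time, retrying after each. The sketch below models that ladder as a pure function. It does not talk to WanGP: the `Settings` class, the halving of the frame count, and the 48-frame floor are all assumptions made for illustration, and the resolution list is taken from the WebUI options described earlier.

```python
from dataclasses import dataclass, replace
from typing import Optional

RESOLUTIONS = ["1080p", "720p", "480p"]  # heaviest to lightest


@dataclass(frozen=True)
class Settings:
    model: str = "Wan 2.2 Standard"
    resolution: str = "720p"
    frames: int = 121
    max_offload: bool = False


def next_lighter(s: Settings, min_frames: int = 48) -> Optional[Settings]:
    """Return the next, lighter configuration to try, or None if nothing is left."""
    if not s.max_offload:                       # Solution 1: maximum RAM offloading
        return replace(s, max_offload=True)
    if s.frames > min_frames:                   # Solution 2: fewer frames
        return replace(s, frames=max(min_frames, s.frames // 2))
    idx = RESOLUTIONS.index(s.resolution)
    if idx < len(RESOLUTIONS) - 1:              # Solution 3: lower resolution
        return replace(s, resolution=RESOLUTIONS[idx + 1])
    if s.model != "Wan 2.2 Ovi":                # Solution 4: switch to Ovi
        return replace(s, model="Wan 2.2 Ovi")
    return None


step = Settings()
while step is not None:
    print(step)
    step = next_lighter(step)
```

Starting from the defaults, the loop prints six configurations, ending at Wan 2.2 Ovi, 480p, 48 frames with maximum offloading.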

Slow Generation Speed

- Solution 1: Check RAM offloading isn't swapping to disk

- Solution 2: Close other VRAM-using applications

- Solution 3: Accept that low VRAM means slow generation

Gradio Interface Crashes

- Solution 1: Use headless mode

- Solution 2: Reduce batch size

- Solution 3: Update to latest version

Colors Look Wrong

Solution: Check color space settings. Some monitors interpret video colorspace differently.

Comparing WanGP to ComfyUI

Both run Wan models. Here's when to use each:

Use WanGP when:

- You want simplicity

- Low VRAM is critical

- You prefer queued batch processing

- You don't need complex workflows

Use ComfyUI when:

- You need maximum control

- You want to combine with other nodes

- You're building complex pipelines

- You have adequate VRAM

I use both: WanGP for quick generations and batches, and ComfyUI when a Wan 2.2 job needs a complex workflow.

My Low-VRAM Workflow

Here's how I actually use WanGP on limited hardware:

- Queue overnight batches: I create prompts throughout the day, queue them before bed

- Generate at 480p first: Quick previews to check if prompts work

- Regenerate winners: Take prompts that produced good 480p outputs and rerun them at 720p

- Use image-to-video: More consistent than pure text-to-video

- Process audio separately: MMAudio after video is complete

This workflow maximizes output from limited hardware.

Frequently Asked Questions

Can I really run this on 6GB VRAM?

Yes, with Wan 2.2 Ovi and maximum offloading. It's slow but functional.

How does it compare to cloud services?

Quality is identical. It's the same models. Speed depends on your hardware versus their infrastructure.

Can I use this commercially?

Check individual model licenses. Wan 2.2 is permissive, but verify for your use case.

Does it work on AMD GPUs?

Currently NVIDIA-focused. AMD support is limited.

How much disk space do I need?

About 30-50GB for models, plus space for outputs.

Can I add custom LoRAs?

Yes, WanGP supports loading LoRAs for style modifications.

What's the longest video I can generate?

Theoretically unlimited with the SVI extension. Practically, 10-30 seconds is common.

Does it work on Mac?

Limited support. NVIDIA GPUs on Windows/Linux work best.

How do I update to new versions?

Run git pull in the installation directory, then update dependencies with pip install -r requirements.txt.

Can I batch-generate multiple videos overnight?

Yes. WanGP supports queued generation through its batch interface. Set up a prompt list, configure your settings once, and let it run. On low-VRAM setups this is the most efficient way to produce volume without sitting at the keyboard.

Tips for Best Results

Prompt Engineering

Be specific about motion:

A woman walks through a forest, camera follows from behind,

sunlight filtering through trees, gentle breeze moves leaves

Use Negative Prompts

Include common video artifacts:

blurry, choppy motion, flickering, distorted, low quality

Optimal Settings for Low VRAM

Resolution: 720p

Frames: 48-72

Steps: 25

CFG: 7

RAM Offload: Maximum

Seed Management

Save good seeds. When you find something that works, reusing that seed with similar prompts often produces good results.
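One low-effort way to keep track of seeds is a small JSON log next to your outputs. The sketch below is independent of WanGP and uses only the standard library. The file name, field names, and the `save_seed` helper are all hypothetical.

```python
import json
from pathlib import Path


def save_seed(log_path, seed: int, prompt: str, note: str = "") -> list:
    """Append a seed/prompt pair to a JSON log file and return all entries."""
    path = Path(log_path)
    # Load existing entries if the log already exists, otherwise start fresh
    entries = json.loads(path.read_text(encoding="utf-8")) if path.exists() else []
    entries.append({"seed": seed, "prompt": prompt, "note": note})
    path.write_text(json.dumps(entries, indent=2), encoding="utf-8")
    return entries


if __name__ == "__main__":
    save_seed(
        "good_seeds.json",
        seed=123456,  # placeholder value
        prompt="A woman walks through a forest, camera follows from behind",
        note="smooth camera motion",
    )
```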

Wrapping Up

WanGP democratizes AI video generation. Hardware that was "impossible" now produces usable results. The quality gap between 6GB and 24GB systems has narrowed significantly.

Is it as fast as running on a 4090? No. Is the quality as high as Runway or Kling? Close, but not quite. Is it free, local, and actually accessible? Absolutely.

If you've been holding off on AI video because of hardware costs, WanGP removes that barrier. Give it a try. You might be surprised what your "inadequate" GPU can actually produce.
