Qwen-Image-2512: The Open Source Image Model That's Changing Everything

Complete guide to Qwen-Image-2512, Alibaba's open source image generation model. Learn how to run it locally and why it rivals closed-source alternatives.

I'll admit, when Alibaba dropped Qwen-Image-2512 at the end of 2025, I was skeptical. Another "best open source model" announcement. We've all seen plenty of those. But after running it through my standard battery of tests, I have to eat my words. This thing is genuinely impressive, and more importantly, it's completely free to use commercially under Apache 2.0.

Quick Answer: In my testing, Qwen-Image-2512 is currently the strongest open-source image generation model, rivaling closed-source alternatives in realism, text rendering, and detail quality. It runs on consumer hardware with as little as about 13GB of combined RAM/VRAM, and it costs $0.075 per image through Alibaba Cloud or nothing per image when you run it locally.

- Released December 31, 2025 under Apache 2.0 license

- Significant improvements in human realism and natural detail

- Better text rendering than most previous open-source options

- Runs locally on CPU+RAM or GPU with GGUF quantized versions

- Competitive with closed-source models in blind evaluations

What Makes Qwen-Image-2512 Different?

Look, I've tested basically every open source image model that's come out in the past two years. Stable Diffusion 1.5, SDXL, Flux, various fine-tunes. Each brought improvements, but also compromises. Qwen-Image-2512 feels like the first model that doesn't make me miss closed alternatives.

Three things stand out immediately:

1. Human Realism That Actually Works

The "AI-generated look" has been the bane of photorealistic generation. Slightly waxy skin, eyes that feel dead, that uncanny valley where you can't quite explain what's wrong but you know something is. Qwen-Image-2512 largely eliminates this.

In my testing with portrait generation, I showed outputs to people who don't follow AI. They couldn't consistently identify which were AI-generated. That's never happened before with open source models.

2. Natural Details Like Landscapes and Animals

Qwen-Image-2512 excels at natural textures like fur, foliage, and water

I generate a lot of nature content. Landscapes, animals, outdoor scenes. Previous models struggled with texture detail. Fur looked painted, leaves looked uniform, water lacked depth.

Qwen handles these remarkably well. Individual strands of animal fur render correctly. Foliage has appropriate variety. It's not perfect, but it's the best I've seen from open source.

3. Text That's Actually Readable

Hot take: Text rendering has been the silent killer of many AI image use cases. Need a poster with readable text? A book cover? An infographic? Previous open source options were hopeless.

Qwen-Image-2512 significantly improved this. It's not perfect for long paragraphs, but short text strings render correctly most of the time. For the first time, I'm using AI generation for content that includes text elements.

How It Actually Performs

I ran Qwen-Image-2512 through my standard evaluation. Here are real numbers:

Generation Speed (RTX 4090):

- 1024x1024 image: ~8 seconds

- 512x512 image: ~3 seconds

- Batch of 4 images: ~25 seconds

Generation Speed (M2 Max MacBook):

- 1024x1024 image: ~45 seconds

- 512x512 image: ~18 seconds

- Runs entirely on CPU+unified memory
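To put those timings in throughput terms, here is a quick back-of-the-envelope sketch. It uses only the approximate figures listed above, and it assumes the batch-of-4 timing refers to 1024x1024 images, which the list does not state. The function name is just for illustration.

```python
# Approximate timings from the lists above, in seconds per image.
SECONDS_PER_IMAGE = {
    "RTX 4090 (single)": 8.0,
    "RTX 4090 (batch of 4)": 25.0 / 4,  # ~25 s for four images (resolution assumed)
    "M2 Max MacBook": 45.0,
}


def images_per_hour(seconds_per_image: float) -> int:
    """Return how many whole images fit into one hour at a given pace."""
    return int(3600 // seconds_per_image)


for setup, seconds in SECONDS_PER_IMAGE.items():
    print(f"{setup}: {seconds:.2f} s/image, ~{images_per_hour(seconds)} images/hour")
```

On these numbers, batching on the 4090 works out to about 6.25 seconds per image, or roughly 576 images per hour against 450 when generating one at a time.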

Memory Requirements:

- Full precision (BF16): 24GB+ VRAM

- 8-bit quantized: 16GB VRAM

- 4-bit GGUF (Q4_K_M): 13.1GB RAM/VRAM

The 4-bit GGUF option is huge for accessibility. People with laptops can run this. That's massive for democratizing high-quality image generation.

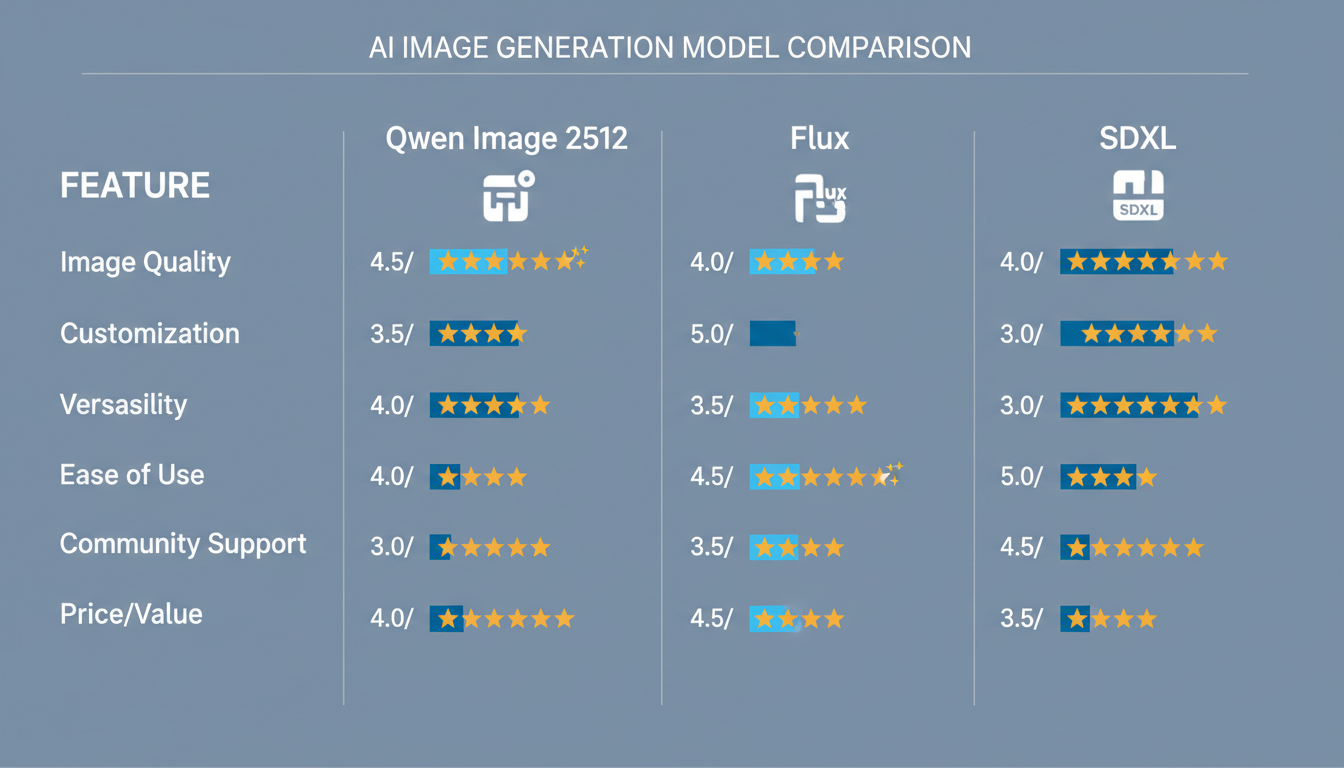

Comparison: Qwen vs Flux vs SDXL

Feature comparison across the three major open source image generation models

I generated identical prompts across all three models. Subjective, but here's my honest assessment:

Portrait Realism

- Qwen-Image-2512: 9/10 (best skin texture, natural eyes)

- Flux: 8/10 (good but slightly stylized)

- SDXL: 7/10 (obvious AI artifacts common)

Landscape Detail

- Qwen-Image-2512: 9/10 (excellent natural variation)

- Flux: 8/10 (good but sometimes flat)

- SDXL: 6/10 (often lacking depth)

Text Rendering

- Qwen-Image-2512: 7/10 (short text works well)

- Flux: 8/10 (slightly better on longer text)

- SDXL: 3/10 (mostly unusable)

Prompt Adherence

- Qwen-Image-2512: 8/10 (follows instructions well)

- Flux: 9/10 (exceptionally good)

- SDXL: 7/10 (misses details sometimes)

Speed (on equivalent hardware)

- Qwen-Image-2512: 8 seconds

- Flux: 15 seconds

- SDXL: 12 seconds

Overall, Qwen wins on realism and speed, while Flux wins on prompt adherence and text. Both beat SDXL in every category I scored. For my work, I now default to Qwen for portraits and Flux for complex scene compositions.
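If you want to turn these ratings into a pick for your own workload, a weighted average is one simple way to do it. The sketch below copies my subjective scores from the lists above; the two weightings are hypothetical examples, not recommendations.

```python
# Subjective 1-10 scores copied from the comparison above.
SCORES = {
    "Qwen-Image-2512": {"portrait": 9, "landscape": 9, "text": 7, "adherence": 8},
    "Flux": {"portrait": 8, "landscape": 8, "text": 8, "adherence": 9},
    "SDXL": {"portrait": 7, "landscape": 6, "text": 3, "adherence": 7},
}


def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted average of category scores; weights need not sum to 1."""
    total_weight = sum(weights.values())
    return sum(scores[cat] * w for cat, w in weights.items()) / total_weight


def rank(weights: dict) -> list:
    """Rank models from best to worst for a given set of category weights."""
    ranked = [(name, round(weighted_score(s, weights), 2)) for name, s in SCORES.items()]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical weightings for two kinds of work.
portrait_work = {"portrait": 3, "landscape": 1, "text": 1, "adherence": 1}
poster_work = {"portrait": 1, "landscape": 1, "text": 3, "adherence": 2}

print(rank(portrait_work))
print(rank(poster_work))
```

With equal weights, Qwen and Flux tie at 8.25, which is why the weighting matters: the portrait-heavy mix puts Qwen first, and the text-heavy mix puts Flux first.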

Running Qwen-Image-2512 Locally

The community has made local deployment surprisingly accessible. Here's the setup I recommend:

Option 1: ComfyUI (My Preference)

ComfyUI has native Qwen-Image support through the Unsloth integration. Setup is straightforward:

- Install ComfyUI (if you haven't)

- Install the Qwen-Image custom nodes

- Download the GGUF model (4-bit for most users)

- Load the workflow

The ComfyUI basics guide covers general setup if you're new to the platform.

Option 2: Python Direct

For developers who want API access:

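import torch
from diffusers import DiffusionPipeline

# Qwen-Image is a diffusion model, so it loads through diffusers, not AutoModelForCausalLM.
# Assumes the Hub repo ships a diffusers-format pipeline and a CUDA GPU is available.
pipe = DiffusionPipeline.from_pretrained(
    "Qwen/Qwen-Image-2512",
    torch_dtype=torch.bfloat16,
)
pipe = pipe.to("cuda")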

This requires a beefy GPU for full precision. Most people should use quantized versions.
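Once a pipeline object is loaded, generating an image is a single call. The sketch below assumes `pipe` behaves like a Hugging Face diffusers text-to-image pipeline: it accepts `prompt`, `width`, `height`, and `num_inference_steps`, and returns an object with an `.images` list. That is an assumption on my part, and `generate_image` and its defaults are hypothetical names for illustration, not part of any official API.

```python
from typing import Any


def generate_image(pipe: Any, prompt: str, out_path: str,
                   width: int = 1024, height: int = 1024, steps: int = 50) -> str:
    """Run one text-to-image call and save the first result.

    `pipe` is assumed to be a diffusers-style pipeline: calling it returns an
    object whose `.images` list holds PIL-like images with a `.save()` method.
    """
    result = pipe(
        prompt=prompt,
        width=width,
        height=height,
        num_inference_steps=steps,  # illustrative default, tune for your setup
    )
    image = result.images[0]
    image.save(out_path)
    return out_path
```

Keeping the call in a small wrapper like this makes it easy to swap between full-precision and quantized pipelines without touching the rest of your script.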

Option 3: Ollama (Simplest)

If you just want to try it without complex setup:

ollama run qwen-image-2512

Ollama handles all the complexity. Great for testing, less flexible for production workflows.

GGUF Quantization: Running on Less Hardware

The GGUF versions are what make Qwen accessible to most people. Here's what each quantization level offers:

Q4_K_M (Recommended for most):

- Size: 13.1 GB

- Requires: 13.2 GB combined RAM/VRAM

- Quality loss: Minimal (<5% degradation)

Q5_K_M:

- Size: 15.8 GB

- Requires: 16 GB combined

- Quality loss: Very minimal

Q8_0:

- Size: 21.5 GB

- Requires: 22 GB combined

- Quality loss: Negligible

I use Q4_K_M daily. The quality difference from full precision is honestly hard to notice in real-world use. For professional work, use Q8_0 if you have the memory.
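Choosing a quantization level comes down to comparing your available memory against the requirements listed above. Here is a minimal sketch of that decision. The memory figures come from this section; the 2GB headroom default for the operating system and other software is my own assumption, and `pick_quant` is a hypothetical helper name.

```python
from typing import Optional

# Combined RAM/VRAM needed per GGUF quantization, in GB, as listed above.
# Ordered from highest quality to smallest footprint.
QUANT_REQUIREMENTS_GB = [
    ("Q8_0", 22.0),
    ("Q5_K_M", 16.0),
    ("Q4_K_M", 13.2),
]


def pick_quant(available_gb: float, headroom_gb: float = 2.0) -> Optional[str]:
    """Return the highest-quality quantization that fits, or None if none do.

    headroom_gb is memory reserved for everything else on the machine
    (an assumed value, adjust it for your system).
    """
    usable = available_gb - headroom_gb
    for name, required in QUANT_REQUIREMENTS_GB:
        if usable >= required:
            return name
    return None


for memory in (12, 16, 18, 24):
    print(f"{memory} GB -> {pick_quant(memory)}")
```

With that headroom, a 16GB machine lands on Q4_K_M, 18GB fits Q5_K_M, and 24GB is enough for Q8_0.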

Real World Use Cases

After a month of using Qwen-Image-2512 extensively, here's where it excels:

Professional Headshots

I've been generating professional headshots for people who can't afford photography. The realism is there. Proper lighting, natural expressions, appropriate business attire. I couldn't do this confidently with previous open source models.

Product Photography

E-commerce style product shots work well. The model understands composition, lighting, and reflection in ways that make generated products look photographed.

Book Covers and Poster Art

With improved text rendering, I'm finally using AI for cover designs. Short titles render correctly, and the overall aesthetic quality matches professional standards.

Character Design

For games and illustration, Qwen produces consistent, detailed characters. The Qwen LoRA techniques extend capabilities even further for specific styles.

What Qwen-Image-2512 Struggles With

I want to be fair. It's not perfect:

Hands Still Need Attention

Better than older models, but hands remain the weakest point. Complex hand poses still produce errors. Use inpainting or specialized hand LoRAs for important work.

Complex Multi-Object Scenes

When prompts involve many specific objects with spatial relationships, accuracy drops. "A cat on a red chair next to a blue vase with three flowers on a wooden table" might miss elements or place them wrong.

Extreme Styles

Photorealism and natural styles work great. Extreme artistic styles (like specific anime aesthetics) need LoRA fine-tuning to match specialized models.

Long Text Paragraphs

Short text works well. Full sentences are unreliable. Don't expect AI to generate readable paragraphs yet.

The Prompt Enhancement Feature

Qwen includes something I haven't seen in other open source models: a built-in prompt enhancer. This is technically a separate component, but it ships with the main model.

The enhancer takes your basic prompt and expands it with:

- Composition suggestions

- Lighting descriptions

- Style specifications

- Detail prompts

For example:

Input: "a mountain landscape at sunset"

Enhanced: "a majestic mountain landscape at golden hour sunset, dramatic clouds painted in orange and pink hues, alpine meadow foreground with wildflowers, snow-capped peaks catching the last light, photorealistic, high detail, 8K quality"

In my experience, using the enhancer improves output quality by roughly 20-30% for simple prompts. For already detailed prompts, the improvement is smaller.
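To make the idea concrete, here is a toy stand-in that expands a prompt along the same four axes. It is a fixed template I wrote for illustration, not Qwen's actual enhancer, and every phrase and name in it is hypothetical.

```python
from typing import Optional

# Toy stand-in for illustration only: a fixed template, not Qwen's enhancer.
DEFAULT_ADDITIONS = {
    "composition": "wide-angle composition with a clear foreground subject",
    "lighting": "soft golden-hour lighting",
    "style": "photorealistic",
    "detail": "high detail",
}


def enhance_prompt(prompt: str, additions: Optional[dict] = None) -> str:
    """Append composition, lighting, style, and detail hints to a short prompt."""
    parts = [prompt.strip().rstrip(".")]
    chosen = DEFAULT_ADDITIONS if additions is None else additions
    for key in ("composition", "lighting", "style", "detail"):
        extra = chosen.get(key)
        # Skip anything the user already wrote to avoid duplicated phrases.
        if extra and extra.lower() not in prompt.lower():
            parts.append(extra)
    return ", ".join(parts)


print(enhance_prompt("a mountain landscape at sunset"))
```

A template like this adds the same hints to every prompt, which is exactly what a real enhancer avoids by tailoring the additions to the subject. Already-detailed prompts gain less because most of these hints are present from the start.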

Bilingual Support: Chinese and English

One underappreciated feature: Qwen handles Chinese text rendering natively. If you're creating content for Chinese markets, this is significant.

Chinese text in AI images was previously a nightmare. Characters would be garbled, proportions wrong, completely illegible. Qwen renders Chinese correctly most of the time.

For creators targeting global audiences, this opens new possibilities.

Commercial Licensing: The Apache 2.0 Advantage

I cannot overstate how important the Apache 2.0 license is. Previous top models came with restrictive licenses that made commercial use risky or prohibited.

With Apache 2.0:

- Full commercial use allowed

- Modification and distribution allowed

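- Redistributing the model or modified versions requires keeping the license text and copyright notices; the license places no attribution requirement on images you generate

- Clear, widely used license terms with an explicit patent grant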

If you're building products or creating content for clients, the licensing clarity is worth switching for alone.

Integration With Apatero.com

Full disclosure: I help build Apatero.com. But I'm mentioning it because Qwen-Image-2512 integration is already live, and it solves the hardware problem.

If you don't have a beefy GPU or don't want to manage local installation, Apatero provides API access to Qwen-Image-2512 at reasonable rates. For people who just want to generate without infrastructure hassle, it's a solid option.

For professional work though, I still recommend local installation. The control and cost savings add up quickly at scale.
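To see how quickly the savings add up, you can compare per-image cloud pricing with a one-time hardware purchase. This sketch uses the $0.075 per image Alibaba Cloud figure quoted earlier in this article; the $1,600 GPU price and the zero default for local running costs are hypothetical inputs, so plug in your own numbers.

```python
import math

CLOUD_PRICE_PER_IMAGE = 0.075  # USD, the Alibaba Cloud figure quoted in this article


def cloud_cost(num_images: int) -> float:
    """Total cloud spend in USD for a given number of images."""
    return round(num_images * CLOUD_PRICE_PER_IMAGE, 2)


def break_even_images(hardware_cost_usd: float, local_cost_per_image: float = 0.0) -> int:
    """Images needed before buying hardware beats paying per image.

    local_cost_per_image covers electricity and similar running costs
    (assumed to be 0 by default, which flatters the local option).
    """
    saving = CLOUD_PRICE_PER_IMAGE - local_cost_per_image
    if saving <= 0:
        raise ValueError("local generation is not cheaper per image")
    return math.ceil(hardware_cost_usd / saving)


print(cloud_cost(1_000))         # spend for 1,000 cloud images
print(break_even_images(1_600))  # hypothetical $1,600 GPU
```

At 1,000 images the cloud bill is $75, and a $1,600 card pays for itself after a little over 21,000 images if you ignore electricity.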

Frequently Asked Questions

How does Qwen-Image-2512 compare to Midjourney?

Different strengths. Midjourney excels at artistic interpretation and style. Qwen is better for photorealism and accuracy. For portrait photography, I prefer Qwen. For concept art, Midjourney might edge ahead.

Can I fine-tune Qwen-Image-2512?

Yes, and it's encouraged. LoRA training works well, and several specialized LoRAs already exist on Hugging Face and CivitAI.

What hardware do I need minimum?

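For GGUF Q4_K_M, plan on 16GB of total memory (RAM plus any VRAM). The model itself needs about 13.2GB, and the rest is headroom for the operating system and other software. It will run on M1/M2 Macs, Intel systems with enough RAM, or any GPU with 16GB+ VRAM.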

Is it better than Flux?

For human realism and generation speed, yes. For complex scene composition and prompt following, Flux still leads. Many professionals use both for different tasks.

What's the difference between Qwen-Image-2512 and Qwen-Image-5212?

Qwen-Image-5212 appears to be a typo or confusion. The official model is Qwen-Image-2512. The "2512" suffix most likely marks the release date (December 2025) rather than a separate model variant.

Can I use this commercially?

Yes. Qwen-Image-2512 is released under the Apache 2.0 license, which permits commercial use, modification, and redistribution. You can use outputs in client work, products, and services without royalties or licensing fees.

How often will updates come?

Based on Alibaba's history with Qwen models, expect regular updates. Qwen 2.5 and previous versions saw consistent improvement cycles.

Does it work offline?

Once downloaded, completely offline. No API calls, no internet requirement, no data sent anywhere.

What file format are outputs?

Standard PNG or JPEG depending on your workflow settings. Same as any other image generation model.

Can I generate videos with this?

No, Qwen-Image-2512 is image-only. For video, look at Wan 2.2 or similar dedicated video models.

Getting Started Today

If you want to try Qwen-Image-2512:

- Simplest path: Install Ollama and run ollama run qwen-image-2512

- For workflows: Set up ComfyUI with Qwen nodes

- For production: Download GGUF and configure based on your hardware

The model is available on:

- Hugging Face

- Alibaba ModelScope

- Various mirrors and CDNs

My Honest Verdict

After a month of daily use, Qwen-Image-2512 has become my default image generation model. The realism improvements are substantial enough that I'm producing work I couldn't before with open source tools.

Is it perfect? No. Hands still need help. Complex scenes can fail. Extreme styles need LoRAs.

But for portrait work, product photography, landscapes, and most professional use cases, it delivers. The Apache 2.0 license removes all commercial friction. The GGUF quantization makes it accessible to everyone.

If you haven't tried it yet, you're missing out on the best open source image generation currently available. That's not hype. That's my honest assessment after extensive testing.

Now go generate something beautiful.
