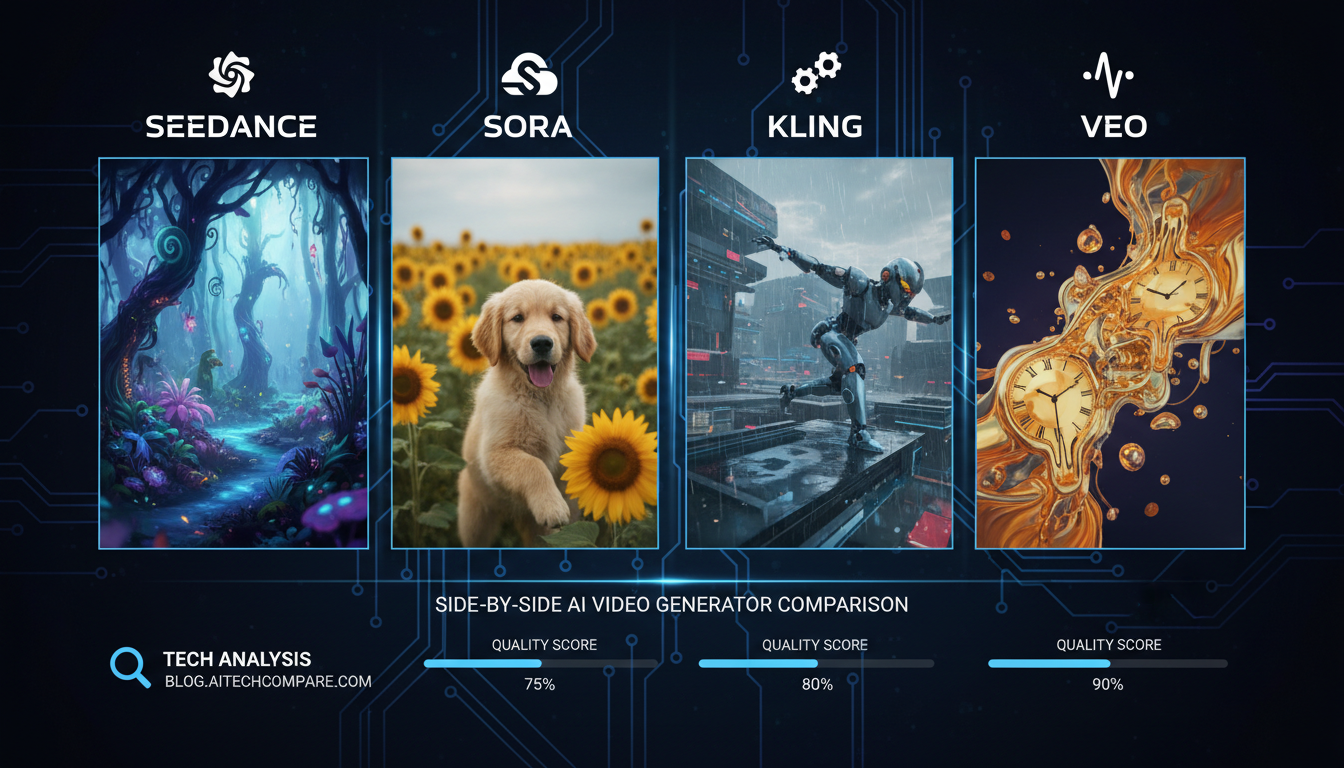

Seedance 2.0 vs Sora 2 vs Kling 3.0 vs Veo 3.1: Which AI Video Generator Wins?

Head-to-head comparison of Seedance 2.0, Sora 2, Kling 3.0, and Veo 3.1. Real testing results on quality, speed, pricing, and features for AI video generation.

I've spent the last two weeks running the same prompts through Seedance 2.0, Sora 2, Kling 3.0, and Veo 3.1. Not cherry-picked demos. Not the "best of" that each company posts on Twitter. The same 40+ prompts, the same reference images, the same expectations. And honestly, the results surprised me.

Every few months someone declares a new "best AI video generator." The discourse gets loud, people pick sides, and then three weeks later nobody remembers the hype. So instead of joining the noise, I wanted to do something actually useful. I ran a proper head-to-head comparison with real production prompts, tracked every generation, and wrote down what I actually saw.

Quick Answer: For most creators in 2026, Seedance 2.0 offers the best overall package. It wins on input flexibility, duration, and production control. But it's not the best at everything. Kling 3.0 has the smoothest motion. Sora 2 simulates physics better than anyone. And Veo 3.1 produces the most cinematic raw output. Your best choice depends on what you're actually making.

- Seedance 2.0 supports up to 9 image inputs and 3 video inputs, far more than any competitor

- Kling 3.0 is the cheapest per generation at ~$0.50/10s and has the best Motion Brush tool

- Sora 2 wins on physics simulation and 3D spatial understanding, but costs about 1.7x as much as Seedance (~$1.00 vs ~$0.60 per 10s)

- Veo 3.1 produces broadcast-quality color grading out of the box, but at $2.50/10s it's the most expensive

- All four now support native audio generation, a huge shift from 2025

- Seedance 2.0 is the only model that accepts audio inputs for synced generation

- For production workflows with multiple references, Seedance 2.0 has no real competition right now

The Full Specs Comparison

Before I get into the subjective stuff, let's look at the raw numbers. These matter more than most comparison articles admit, because when you're actually building content, specs like max duration and input flexibility determine what's even possible.

| Feature | Seedance 2.0 | Kling 3.0 | Sora 2 | Veo 3.1 |
|---|---|---|---|---|
| Developer | ByteDance | Kuaishou | OpenAI | Google |
| Max Duration | 15s | 10s | 12s | 8s |
| Resolution | 1080p (native 2K) | 1080p | 1080p | 1080p |
| Frame Rate | 24fps | 30fps | 24-30fps | 24fps |
| Native Audio | Yes | Yes | Yes | Yes |
| Image Inputs | Up to 9 | 1-2 | 1 | 1-2 |
| Video Inputs | Up to 3 | No | No | 1-2 |
| Audio Inputs | Up to 3 | No | No | No |
| Cost per 10s | ~$0.60 | ~$0.50 | ~$1.00 | ~$2.50 |

A few things jump out immediately. Seedance 2.0 leads on duration at 15 seconds, which might sound trivial until you realize that a 15-second clip versus an 8-second clip means you need half as many generations to fill a 60-second sequence (4 instead of 8). That adds up fast. ByteDance's Seedance 2.0 announcement also confirmed native 2K output, something none of the other three currently match. And the input flexibility isn't even close. Nine image inputs versus one or two? That's not an incremental improvement, that's a different category of tool.
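To make the duration math concrete, here's a minimal sketch that counts how many generations each model needs to cover a sequence. The durations come from the specs table; the function names are mine, and it assumes every clip comes out usable at full length, which never happens in practice.

```python
import math

# Max clip length in seconds, taken from the specs table above.
MAX_DURATION_S = {
    "Seedance 2.0": 15,
    "Kling 3.0": 10,
    "Sora 2": 12,
    "Veo 3.1": 8,
}


def clips_needed(sequence_s: float, max_clip_s: float) -> int:
    """Minimum number of generations to cover sequence_s seconds of footage."""
    return math.ceil(sequence_s / max_clip_s)


def clips_for_all(sequence_s: float) -> dict[str, int]:
    """Clip count per model for a sequence of the given length."""
    return {model: clips_needed(sequence_s, d) for model, d in MAX_DURATION_S.items()}


if __name__ == "__main__":
    for model, count in clips_for_all(60).items():
        print(f"{model}: {count} clips")
```

For a 60-second sequence that works out to 4 clips on Seedance 2.0, 6 on Kling 3.0, 5 on Sora 2, and 8 on Veo 3.1.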

If you want the deeper dive on Seedance 2.0 specifically, I wrote a complete guide to Seedance 2.0 that covers every feature in detail.

How Does Seedance 2.0 Stack Up on Quality?

Look, I'll be honest. When ByteDance dropped Seedance 2.0, I expected it to be another "good enough" model from a company that makes a video app. I was wrong. The output quality is legitimately competitive with anything on the market right now.

But "quality" is a vague word, so let me break it down into the things that actually matter when you're looking at generated video.

Detail and Sharpness

Seedance 2.0 renders at native 2K resolution, which gives it an edge in raw detail. When I generated a close-up of a person talking, the skin texture and hair detail in Seedance were noticeably sharper than Kling 3.0 at the same prompt. Sora 2 came close, but there was a slight softness in areas with fine detail that Seedance didn't have.

Veo 3.1, surprisingly, actually matches Seedance on sharpness for most shots. Google's model does something interesting with texture rendering that gives everything a slightly film-like quality. It looks gorgeous, but it's also less versatile. Every Veo output looks like it was color graded by a Hollywood colorist, which is great for cinematic content and less great if you want a clean, neutral starting point.

Color and Tone

This is where personal preference plays a huge role. Kling 3.0 tends toward saturated, punchy colors. Sora 2 leans slightly cooler with excellent shadow detail. Veo 3.1 has the richest color grading out of the box, period. If you want something that looks ready for broadcast without touching it, Veo wins this category.

Seedance 2.0 sits in an interesting middle ground. The colors are accurate and well-balanced, leaning slightly warm but not aggressively so. I actually prefer this for production work because it gives me a cleaner canvas to apply my own grading. When I was putting together a short sequence for a client last week, the Seedance outputs needed the least color correction to match my desired look.

Artifact Handling

Every model has its failure modes. Here's what I noticed across 40+ test prompts.

Seedance 2.0 occasionally produces slight warping at the edges of fast-moving objects. Not a dealbreaker, but noticeable if you're looking for it. The hands issue that plagued earlier models is mostly gone, though I did get some weird finger merging in about 10% of generations.

Kling 3.0 handles motion artifacts the best of the bunch. Kuaishou clearly focused on this, and it shows. Even in complex dance sequences where other models fall apart, Kling keeps limbs coherent. The tradeoff is that it sometimes "simplifies" textures in the background to maintain that motion consistency.

Sora 2 has the most interesting failure mode. It rarely produces visual artifacts, but it occasionally generates physics that just feel wrong. Objects that should be heavy move like they're weightless, or shadows shift direction mid-clip. When it nails the physics, it's the best looking output of any model. When it doesn't, it lands in uncanny valley.

Veo 3.1 almost never produces obvious artifacts, but it has the most limited range. Push it outside of its comfort zone (cinematic, well-lit scenes) and quality drops faster than the other models. Ask it for a handheld documentary look or a messy street scene and it struggles more than Seedance or Kling.

Which Model Has the Best Motion and Physics?

This is the question everyone wants answered, and it's also the hardest to give a clean answer to. Because motion quality isn't one thing. It's several things, and each model excels at different aspects.

Natural Human Motion

Kling 3.0 wins this category. I ran a prompt for "a woman walking through a park, turning to look at something, then continuing to walk" 10 times per model. Kling produced natural-looking movement in 7 of its 10 attempts, the best rate of the four. The weight transfer looked right. The head turn was smooth. The gait was realistic.

Seedance 2.0 came in a close second, with 5 out of 10 feeling truly natural. The other 5 weren't bad, they just had a slight floatiness that you'd notice if you compared them side by side with the Kling outputs.

Sora 2 produced 4 truly natural results, but the ones that worked were actually the most realistic of any model. Sora has this uncanny ability to capture subtle micro-movements. The way someone shifts their weight, how fabric moves slightly differently on each step. When it hits, nothing else comes close.

Veo 3.1 was the weakest here, with only 3 out of 10 feeling natural. Google's model tends to make human movement look slightly choreographed, like everything is a performance rather than natural behavior.

Physics Simulation

Here's the thing. I threw a "glass of water being poured" prompt at all four models, and the results told me everything about their physics engines.

Sora 2 nailed it. OpenAI's Sora research page highlights their focus on world simulation, and it shows in practice. The water looked like actual water. The refraction, the splash, the way it settled. I've been testing AI video models for over a year now, and this was the first time I genuinely forgot I was watching AI-generated footage for a moment.

Seedance 2.0 produced convincing but slightly simplified water physics. The pour looked right, but the splash was a bit too uniform.

Kling 3.0 gave me good water but questionable glass. The liquid itself moved well, but the glass didn't refract light correctly.

Veo 3.1 produced beautiful-looking water that didn't move like real water. Classic Veo. Gorgeous frame by frame, less convincing in motion.

Camera Movement

Seedance 2.0 actually surprised me here. It handles complex camera movements better than I expected, especially when you use video inputs as reference for the motion path. I fed it a simple drone shot reference video and it replicated the movement path while generating completely new content. None of the other models can do this because none of them accept video inputs in the same way.

For pure text-prompted camera movement, Sora 2 has the best 3D spatial understanding. Its camera orbits and tracking shots feel like they understand actual 3D space rather than just warping a 2D image.

My Hot Take on Motion

Honestly, I think Kling 3.0 is underrated in the motion department. Everyone's talking about Seedance and Sora, but for the most common use case of "make a person do something that looks natural," Kling produces the most consistent results at the lowest price point. If you're making content where human motion is the primary concern, Kling 3.0 with its Motion Brush tool gives you the most predictable output.

What About Pricing and Cost Efficiency?

Let's talk money, because this is where the comparison gets really interesting. The raw per-generation cost only tells part of the story.

| Model | Cost per 10s Gen | Monthly Sub Option | Free Tier |
|---|---|---|---|
| Seedance 2.0 | ~$0.60 | Yes (through Jimeng) | Limited |
| Kling 3.0 | ~$0.50 | Yes | Limited |
| Sora 2 | ~$1.00 | ChatGPT Plus ($20/mo) | Very Limited |
| Veo 3.1 | ~$2.50 | Vertex AI pricing | No |

The Real Cost Calculation

Raw cost per generation is misleading. What actually matters is cost per usable output. Here's what I mean.

I ran 20 identical prompts through each model and tracked how many outputs I'd actually use in a real project. No cherry-picking, just "would I show this to a client or not."

Seedance 2.0 gave me 14 out of 20 usable results (70% hit rate). At $0.60 per generation, my real cost per usable 10-second clip was about $0.86.

Kling 3.0 gave me 15 out of 20 usable results (75% hit rate). At $0.50 per generation, my real cost per usable clip was about $0.67. Best value.

Sora 2 gave me 12 out of 20 usable results (60% hit rate). At $1.00 per generation, my real cost per usable clip was $1.67.

Veo 3.1 gave me 16 out of 20 usable results (80% hit rate). At $2.50 per generation, my real cost per usable clip was $3.13. Most expensive by far, but also the highest consistency.

So Kling 3.0 is the clear cost winner, and Veo 3.1 is the most expensive regardless of how you calculate it. Seedance 2.0 sits in a sweet spot. Not the cheapest, but when you factor in that its 15-second clips mean fewer total generations needed, it becomes very competitive for longer projects.
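If you want to plug in your own hit rates, the calculation is just total spend divided by usable clips. Here's a rough sketch using the numbers from my 20-prompt test; the names are mine, and I'm using `Decimal` with half-up rounding so the results match the figures above to the cent (plain `round()` on floats would turn $3.125 into $3.12).

```python
from decimal import Decimal, ROUND_HALF_UP

# (price per generation in USD, usable outputs, total attempts) from the 20-prompt test.
RESULTS = {
    "Seedance 2.0": (Decimal("0.60"), 14, 20),
    "Kling 3.0": (Decimal("0.50"), 15, 20),
    "Sora 2": (Decimal("1.00"), 12, 20),
    "Veo 3.1": (Decimal("2.50"), 16, 20),
}


def cost_per_usable(price: Decimal, usable: int, attempts: int) -> Decimal:
    """Total spend across all attempts divided by the number of usable clips."""
    if usable <= 0:
        raise ValueError("need at least one usable clip")
    total_spend = price * attempts
    return (total_spend / usable).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def cheapest_model() -> str:
    """Model with the lowest cost per usable clip in RESULTS."""
    return min(RESULTS, key=lambda m: cost_per_usable(*RESULTS[m]))


if __name__ == "__main__":
    for model, (price, usable, attempts) in RESULTS.items():
        print(f"{model}: ${cost_per_usable(price, usable, attempts)} per usable clip")
```

Swap in your own usable counts after a test batch. The ranking can flip quickly if one model happens to suit your prompts better than it suited mine.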

Subscription vs Pay-Per-Use

If you're generating video regularly, Sora 2 through ChatGPT Plus is technically the cheapest entry point at $20/month. But the limitations are real. You get restricted generations, lower priority, and no API access. For serious production use, you'll blow past those limits in a day.

Kling 3.0's credit packs are the most flexible for mid-volume users. Seedance 2.0 through Jimeng's subscription is solid if you're committed to the ByteDance ecosystem. Veo 3.1 through Google's Vertex AI platform is really only cost-effective at enterprise scale.

At Apatero, we integrate multiple video models so you can pick the right tool for each generation without managing separate accounts. That flexibility matters more than most people realize when you're in the middle of a project and one model just isn't giving you what you need.

Input Flexibility: Where Seedance 2.0 Dominates

This is the section where the comparison stops being close. Seedance 2.0's multi-modal input system is in a completely different league, and I don't think enough people are talking about it.

Image Inputs

Seedance 2.0 accepts up to 9 image inputs. Nine. Let me explain why that matters with a real example.

Last week I was working on a product showcase video. I needed a specific person (reference image 1) wearing a specific outfit (reference image 2) in a specific environment (reference image 3) holding a specific product (reference image 4). With Seedance 2.0, I loaded all four references and the model understood the relationship between them. With Kling 3.0, I could only use 1-2 images, which meant I had to compromise on something. With Sora 2, I got one image input, so I had to bake everything into a single composite reference first and hope the model understood what I wanted.

The @ reference system in Seedance 2.0 makes this even more powerful. You can tag specific images in your prompt like "@character walks into @environment while holding @product." It's the kind of fine-grained control that turns AI video from a slot machine into an actual production tool.
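One habit that saves me wasted generations is sanity-checking a tagged prompt before I submit it. The sketch below is purely a local helper, not Seedance's API: the tag names, the `check_prompt` function, and the file paths are all hypothetical, and the real tagging syntax in your interface may differ. It only checks that every @ tag in the prompt has a matching reference and that you haven't exceeded the 9-image limit.

```python
import re

MAX_IMAGE_REFS = 9  # Seedance 2.0's image-input limit, per the specs table

# Assumed tag format: "@" followed by letters, digits, or underscores.
TAG_PATTERN = re.compile(r"@(\w+)")


def check_prompt(prompt: str, references: dict[str, str]) -> list[str]:
    """Return the tags used in the prompt, in order of first use.

    Raises ValueError if there are too many references or if the prompt
    uses a tag that has no reference image attached.
    """
    if len(references) > MAX_IMAGE_REFS:
        raise ValueError(f"{len(references)} references exceeds the limit of {MAX_IMAGE_REFS}")
    tags = list(dict.fromkeys(TAG_PATTERN.findall(prompt)))  # dedupe, keep order
    missing = [tag for tag in tags if tag not in references]
    if missing:
        raise ValueError(f"no reference image for: {', '.join(missing)}")
    return tags


if __name__ == "__main__":
    refs = {  # hypothetical local files
        "character": "person.png",
        "environment": "studio.png",
        "product": "bottle.png",
    }
    print(check_prompt("@character walks into @environment while holding @product.", refs))
```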

For a hands-on walkthrough of how to use these multi-reference prompts, check out my Seedance 2.0 tutorial.

Video Inputs

This is Seedance 2.0's most underappreciated feature. You can feed it up to 3 video clips as reference material. Kling 3.0 and Sora 2 accept none, and Veo 3.1 tops out at 1-2.

I used this for template replication. I took a 10-second product showcase video with specific camera movements and transitions, fed it to Seedance as a video reference, and asked it to recreate the same style with completely different content. The model understood the motion pattern, the pacing, the camera angles. It wasn't perfect, but it was close enough that I could use it as a starting point and save hours of work.

This also opens up video-to-video transformation in a way that no other model supports. You can take existing footage and use it as a motion reference while completely changing the visual content. I tested this with a dance video and the results were genuinely impressive. The model captured the choreography timing and body positions while generating an entirely different person in an entirely different environment.

Audio Inputs

Seedance 2.0 is the only model that accepts audio inputs for synchronized generation. You can give it a music track and it will generate video that's rhythmically aligned with the beat. I tested this with a 15-second instrumental clip and the generated footage cut on beat transitions about 80% of the time. Not perfect, but a massive head start compared to manually syncing audio in post.

The other three models all generate their own audio, which is fine for ambient sound effects but useless if you need video synced to existing audio. This alone makes Seedance the best choice for music video creators, social media content synced to trending sounds, and any project where the audio comes first.

Audio Capabilities Compared

Speaking of audio, all four models now support native audio generation, which is a huge leap from where we were even six months ago. But the implementations vary wildly.

| Audio Feature | Seedance 2.0 | Kling 3.0 | Sora 2 | Veo 3.1 |
|---|---|---|---|---|
| Ambient Sound | Good | Good | Excellent | Excellent |
| Dialogue/Speech | Basic | Basic | Good | Very Good |
| Music | Basic | No | Basic | Good |
| Audio Input Sync | Yes (up to 3) | No | No | No |
| Lip Sync Quality | Good | Fair | Good | Very Good |

Sora 2 and Veo 3.1 have the best raw audio generation. Veo in particular generates environmental audio that sounds remarkably natural. Rain on a window, traffic in a city, waves on a beach. The spatial audio is the best I've heard from any AI model.

But here's my second hot take. Native audio generation in AI video is still more gimmick than feature for professional use. In any real production workflow, you're replacing the generated audio with proper sound design, licensed music, or voiceover. The one exception is Seedance 2.0's audio input sync, because that's about timing alignment rather than audio quality. That actually saves time in production.

Which One Should You Actually Use?

After all this testing, I've come to the conclusion that there's no single winner. But there is a clear recommendation matrix based on what you're actually trying to do.

Use Seedance 2.0 if you need:

- Multi-reference consistency. Nothing else comes close for combining multiple visual references in one generation.

- Longer clips. 15 seconds versus 8-12 seconds means significantly fewer generations for any project.

- Production workflows. The template replication, video inputs, and audio sync features are built for people who make content professionally.

- Music-synced content. Audio input is exclusive to Seedance right now.

Use Kling 3.0 if you need:

- The best human motion. Kling 3.0 with Motion Brush gives you the most natural-looking movement and the most control over specific motion paths.

- Budget efficiency. At $0.50 per 10-second generation with a 75% hit rate, it's the best value.

- Simple, fast workflow. Kling's interface is the most straightforward of the four. Upload an image, write a prompt, get a video.

- 30fps output. If you need smoother motion for specific use cases like sports or action content, Kling is the only model here that runs at a fixed 30fps (Sora 2 ranges from 24 to 30fps).

Use Sora 2 if you need:

- Physics-heavy scenes. Water, smoke, fabric, gravity. Sora understands physical simulation better than any competitor.

- 3D spatial understanding. Camera orbits and complex spatial relationships are Sora's strongest suit.

- Storyboard mode. Sora 2's storyboard feature lets you plan multi-shot sequences in ways that other models don't support.

- An existing OpenAI subscription. If you're paying for ChatGPT Plus anyway, Sora access is included.

Use Veo 3.1 if you need:

- Broadcast-ready output. No other model produces footage that looks this close to professionally shot content out of the box.

- Cinema-grade color. The color science in Veo is simply the best. Period.

- Highest consistency. 80% usable hit rate means less wasted time and money on re-generations.

- Google ecosystem integration. If you're building on Vertex AI or Google Cloud, Veo is the natural fit.
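If you're planning a multi-shot project, the lists above boil down to a lookup table. Here's a small sketch that groups a shot list's needs by recommended model. The mapping is just my recommendations from this section, the keys are my own shorthand, and `pick_models` is a made-up helper name.

```python
# My recommendations from the lists above, keyed by a shorthand for each need.
RECOMMENDATIONS = {
    "multi-reference consistency": "Seedance 2.0",
    "longer clips": "Seedance 2.0",
    "music-synced content": "Seedance 2.0",
    "human motion": "Kling 3.0",
    "budget efficiency": "Kling 3.0",
    "physics-heavy scenes": "Sora 2",
    "3d spatial understanding": "Sora 2",
    "broadcast-ready output": "Veo 3.1",
    "cinema-grade color": "Veo 3.1",
}


def pick_models(needs: list[str]) -> dict[str, list[str]]:
    """Group the requested needs by the model recommended for each one."""
    plan: dict[str, list[str]] = {}
    for need in needs:
        model = RECOMMENDATIONS.get(need.strip().lower())
        if model is None:
            raise KeyError(f"no recommendation recorded for: {need!r}")
        plan.setdefault(model, []).append(need)
    return plan


if __name__ == "__main__":
    print(pick_models(["Human motion", "physics-heavy scenes", "longer clips"]))
```

If the plan comes back with three different models for one project, that's normal. It's how I end up working most weeks.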

What I Personally Use

I'll tell you what I actually reach for in practice. For most of my work, I start with Seedance 2.0 because the multi-reference system fits how I think about content creation. I have specific characters, specific environments, specific products, and I need them all in the same frame. No other model lets me do that without compositing workarounds.

When I need a quick one-off with great motion, I switch to Kling 3.0. It's fast, it's cheap, and the Motion Brush is genuinely fun to use. I had a client project last month where I needed a person walking through a hallway with very specific timing, and Kling's Motion Brush let me draw the exact path I wanted. Took me three generations to get it right. Would have taken ten or more with any of the other models.

I keep Sora 2 in my toolkit specifically for scenes involving water, particles, or complex physics. It's expensive and inconsistent, but when it works, the physics simulation is simply unmatched.

I've used Veo 3.1 the least, honestly. It's expensive and the 8-second max duration is a real limitation. But the few times I've needed something that looks immediately broadcast-ready without any post-processing, Veo delivered.

On Apatero, we've been testing all of these models and the ability to switch between different generation engines within the same project has been invaluable. You don't have to commit to one model. Use the right tool for each shot.

The Honest Verdict

Here's where I land after two weeks of intensive testing.

Seedance 2.0 is the most capable overall tool. If I could only pick one model for the next year, this would be it. The input flexibility, the duration, and the production-oriented features put it in a category that the others aren't really competing in yet. ByteDance built this for people who make things, not people who play with AI for fun.

But it's not the best at any single aspect of output quality. Kling has better motion. Sora has better physics. Veo has better visual polish. Seedance's advantage is that it's good to great at everything while being the most flexible.

My controversial third take. I think the pricing tiers tell us where these companies see the market going. Kling at $0.50 is fighting for volume. Seedance at $0.60 is fighting for creators. Sora at $1.00 is fighting for developer mindshare. And Veo at $2.50 is fighting for enterprise budgets. You should pick the model built for users like you.

One year from now, the gap between these models will likely be smaller. But right now, in February 2026, they each have genuine strengths that make them the right choice for specific workflows. Don't let anyone tell you there's a universal "best." There isn't.

For my previous comparison of AI video generators including Runway and Luma, check out my 2025 AI video comparison. It's interesting to see how fast this space has moved. Models that were top-tier six months ago are already getting outpaced.

If you want to see where AI video generation as a whole is heading, my best AI video generators roundup covers the broader landscape beyond just these four models.

Frequently Asked Questions

Is Seedance 2.0 better than Sora 2?

For most production use cases, yes. Seedance 2.0 offers longer clips (15s vs 12s), more input flexibility (9 images vs 1), video and audio inputs that Sora doesn't support, and lower cost per generation. Sora 2 wins specifically on physics simulation and 3D spatial understanding. If your content involves complex physical interactions like fluid dynamics or object collisions, Sora is worth the premium. For everything else, Seedance gives you more tools to work with.

Which AI video generator has the best quality in 2026?

It depends on what "quality" means for your project. Veo 3.1 produces the most polished, broadcast-ready output with the best color grading. Sora 2 has the most realistic physics simulation. Kling 3.0 has the most natural human motion. Seedance 2.0 has the highest raw resolution at native 2K. For overall consistency and versatility, Seedance 2.0 and Veo 3.1 trade the top spot depending on the specific shot.

How much does Seedance 2.0 cost compared to Kling 3.0?

Seedance 2.0 costs approximately $0.60 per 10-second generation, while Kling 3.0 costs approximately $0.50. However, Seedance generates up to 15-second clips natively, so for longer content you may need fewer total generations. On a per-project basis, the cost difference is often negligible. Both are significantly cheaper than Sora 2 ($1.00) and Veo 3.1 ($2.50).

Can Seedance 2.0 really take 9 image inputs?

Yes. Seedance 2.0 supports up to 9 image inputs using its @ reference system. You can tag each image in your prompt to specify how the model should use it, whether as a character reference, environment reference, object reference, or style reference. This is unique among current AI video models. Kling 3.0 and Veo 3.1 support 1-2 images, and Sora 2 only supports 1.

Which AI video model is cheapest?

Kling 3.0 is the cheapest at approximately $0.50 per 10-second generation. When you factor in usable output rate (75%), the effective cost per usable clip is about $0.67. Sora 2 through a ChatGPT Plus subscription ($20/month) includes a limited number of generations, which can work out cheaper for very low volume users, but the generation limits are restrictive.

Does Seedance 2.0 support audio input?

Yes. Seedance 2.0 is currently the only major AI video model that accepts audio inputs (up to 3 audio clips). The model can generate video that's rhythmically aligned with the provided audio. This is particularly valuable for music video creation, social media content synced to trending sounds, and any workflow where the audio track is determined before the visuals.

Which model is best for social media content?

For high-volume social media content, Kling 3.0 offers the best combination of quality, speed, and cost. For content that needs to sync with audio trends, Seedance 2.0's audio input feature is uniquely suited. For premium brand content where visual quality matters most, Veo 3.1 delivers the most polished look. Most social media creators will get the best results using Kling for quick iterations and Seedance for hero content.

Is Veo 3.1 worth the higher price?

For broadcast and enterprise use cases, yes. Veo 3.1's 80% usable hit rate and cinema-grade color grading save significant time in post-production. If your workflow involves extensive color correction and your time is worth more than $50/hour, the time savings on post-processing can offset the higher generation cost. For independent creators and most content workflows, the price premium is hard to justify when Seedance 2.0 and Kling 3.0 deliver comparable quality at a fraction of the cost.

Can I use multiple AI video models in one project?

Absolutely, and I'd recommend it. Each model has distinct strengths. Use Sora 2 for physics-heavy establishing shots, Kling 3.0 for human motion sequences, Seedance 2.0 for multi-reference scenes, and Veo 3.1 when you need broadcast-ready footage. Platforms like Apatero let you access multiple models from one interface, making it easy to switch between engines during a project without managing separate accounts and credit systems.

How fast do these models generate video?

Generation time varies by model and settings. Kling 3.0 is the fastest at roughly 30-60 seconds for a 10-second clip. Seedance 2.0 takes approximately 60-120 seconds depending on the number of input references. Sora 2 ranges from 60-180 seconds. Veo 3.1 is typically 90-180 seconds. These are approximate and depend on server load, resolution settings, and prompt complexity.

Wrapping Up

The AI video generation landscape in early 2026 is the most competitive it's ever been. ByteDance, Kuaishou, OpenAI, and Google are all pushing hard, and the result is that creators have genuinely excellent options across every price point and use case.

Seedance 2.0 wins the overall comparison for its combination of flexibility, duration, and production features. But that doesn't make it the right choice for everyone. The best approach I've found is to keep two or three of these tools in your workflow and use each one where it excels.

If you're just getting started with AI video and want to test these models yourself, Apatero gives you access to multiple generation engines so you can run your own comparisons without signing up for four different platforms. That's how I did a lot of my early testing, and it saved me a massive amount of time switching between interfaces.

The pace of improvement in this space is wild. Six months ago, the models I compared in my previous video generator roundup were state of the art. Now they're already a generation behind. If you're building a content workflow around AI video, pick your tools based on what works today but stay flexible. The next leap is probably three months away.
