Kandinsky Image-to-Video Complete Guide: 5.0 Lite to 3.1

Complete guide to Kandinsky's image-to-video models from 5.0 Lite to 3.1. Setup instructions, quality comparisons, and optimization techniques included.

Kandinsky 5.0 Lite I2V, released November 15, 2025, runs on GPUs with as little as 12GB of memory and generates 5-second videos using Flash Attention 2, Sage Attention, or SDPA. Kandinsky 4.0 offers a complete pipeline covering T2V, I2V, T2I2V, and V2A generation, with an accelerated distilled version that produces 12-second 480p videos in just 11 seconds on a single GPU. Both models use the Apache 2.0 license for maximum flexibility.

Kandinsky has quietly become one of the most capable open-source video generation pipelines available in late 2025. While models like Wan2.2 and HunyuanVideo dominate community discussions, Kandinsky delivers exceptional results with remarkably efficient hardware requirements and a permissive Apache 2.0 license that makes it ideal for commercial applications.

The November 15, 2025 release of Kandinsky 5.0 Lite I2V marks a significant milestone for accessible video generation. Running the entire pipeline with just 24GB of RAM through intelligent offloading means creators with mid-range hardware can finally participate in the image-to-video revolution without cloud computing costs.

Understanding the differences between Kandinsky 5.0 Lite and 4.0 is what separates frustrating experiments from reliable creative production. If you're new to ComfyUI video workflows, start with our ComfyUI basics guide and beginner's workflow guide before exploring Kandinsky specifics.

:::tip[Key Takeaways]

- Follow the step-by-step setup process for the best results with Kandinsky image-to-video
- Start with the basics before attempting advanced techniques
- Common mistakes are easy to avoid with proper setup
- Practice improves results significantly over time

:::

TL;DR Summary for Kandinsky I2V

Kandinsky 5.0 Lite I2V requires only 12GB GPU memory for 5-second generation using modern attention mechanisms and supports the entire pipeline on 24GB system RAM with offloading. The T2V Lite model has 2B parameters with Qwen 2.5VL 7B text encoding and HunyuanVideo VAE. Kandinsky 4.0 provides comprehensive T2V, I2V, T2I2V, and V2A pipelines with an accelerated distilled version generating 12-second 480p videos in 11 seconds. The simple architecture adapts easily to various generation tasks under the Apache 2.0 license. ComfyUI support has been requested by the community through Issue #10134.

Why Kandinsky Stands Out in the 2025 Video Generation Landscape

The video generation field has exploded with options throughout 2025, yet Kandinsky maintains distinct advantages that make it worth serious consideration alongside more widely discussed alternatives.

The Kandinsky Philosophy

Kandinsky's development team prioritizes accessibility and adaptability over raw parameter counts. Rather than building the largest possible model, they focused on efficient architectures that deliver professional results on consumer hardware while remaining simple enough to adapt for various generation tasks.

This approach resonates with creators who need reliable video generation without investing in expensive cloud computing or high-end workstations.

Apache 2.0 Licensing Advantage

Unlike models with restrictive commercial clauses, Kandinsky's Apache 2.0 license permits commercial use, modification, distribution, and private use, and it places no attribution requirement on the videos you generate. Redistributing the licensed code or weights does require keeping the license text and any notices. This makes it particularly attractive for businesses, freelancers, and content creators monetizing their work.

The licensing consideration often gets overlooked in model comparisons but significantly impacts real-world usability for professional applications.

Architecture Simplicity Benefits

The Kandinsky team explicitly designed their architecture for adaptability. The simple design means developers and researchers can modify the pipeline for specialized tasks without months of architecture comprehension. This has already resulted in community variants optimized for specific use cases.

For professional workflows requiring customization, this architectural accessibility provides substantial value compared to black-box alternatives. Platforms like Apatero.com recognize this flexibility potential and continue monitoring Kandinsky developments for integration opportunities.

Kandinsky 5.0 Lite I2V Detailed Look

The November 15, 2025 release of Kandinsky 5.0 Lite I2V represents a new approach to accessible video generation with intelligent component selection and optimization strategies.

Core Architecture Components

| Component | Specification | Purpose |
|---|---|---|
| Text Encoder | Qwen 2.5VL 7B | Vision-language understanding for prompts |
| VAE | HunyuanVideo VAE | Video encoding and decoding |
| T2V Lite Parameters | 2 billion | Core generation model size |
| Minimum GPU Memory | 12GB | Entry-level requirement |
| Full Pipeline RAM | 24GB | With intelligent offloading |

Attention Mechanism Support

Kandinsky 5.0 Lite supports three modern attention implementations for optimal performance across different hardware configurations.

| Attention Type | Best For | Memory Efficiency | Speed |
|---|---|---|---|
| Flash Attention 2 | NVIDIA Ampere+ GPUs | Excellent | Fastest |
| Sage Attention | Broad compatibility | Very Good | Fast |
| SDPA | PyTorch native | Good | Moderate |

Flash Attention 2 provides the best performance on compatible hardware with its memory-efficient algorithm that reduces memory usage from quadratic to linear scaling. For RTX 30-series and newer GPUs, this typically enables 30-40% faster generation compared to standard attention.

Sage Attention offers excellent performance across a broader range of hardware while maintaining strong memory efficiency. For users without an Ampere or newer GPU, it is the best balance of speed and compatibility.

SDPA through PyTorch's native scaled dot product attention provides guaranteed compatibility with any modern PyTorch installation without additional dependencies.
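The preference order described above (Flash Attention 2, then Sage Attention, then SDPA) can be sketched as a small selection helper. This is an illustration only, not Kandinsky's own loader: the import names `flash_attn` and `sageattention` are assumptions about how the two packages install, and the helper does not check GPU architecture.

```python
from importlib.util import find_spec

# Preference order from the table above. The module names are assumptions:
# flash-attn is assumed to import as `flash_attn`, SageAttention as `sageattention`.
BACKEND_MODULES = [
    ("flash_attention_2", "flash_attn"),
    ("sage_attention", "sageattention"),
]


def pick_attention_backend(is_installed=None):
    """Return the first installed backend in preference order, else "sdpa"."""
    if is_installed is None:
        # Default check: can Python locate the package without importing it?
        is_installed = lambda name: find_spec(name) is not None
    for backend, module_name in BACKEND_MODULES:
        if is_installed(module_name):
            return backend
    # SDPA ships with PyTorch itself, so it serves as the fallback.
    return "sdpa"
```

Calling `pick_attention_backend()` with no arguments inspects the current environment; passing a custom `is_installed` callable makes the choice easy to test or override.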

5-Second Generation Workflow

The standard Kandinsky 5.0 Lite I2V workflow produces 5-second video clips at reasonable quality for most creative applications. Generation time varies based on attention mechanism and hardware but typically falls between 30 seconds and 2 minutes on 12GB GPUs.

Memory Management with Offloading

The 24GB full pipeline requirement assumes system RAM offloading for model components not actively in use. This intelligent memory management allows the GPU to focus on active computations while temporarily storing other components in system memory.

For systems with 32GB or more RAM, this offloading happens transparently with little noticeable performance impact. Systems with exactly 24GB should close other applications to ensure sufficient available memory during generation.

Vision-Language Text Encoding

The Qwen 2.5VL 7B text encoder brings vision-language capabilities to prompt understanding. The encoder not only comprehends text descriptions but can also reason about visual concepts and relationships that traditional CLIP encoders miss.

Practical benefits include better understanding of spatial relationships in prompts, more accurate interpretation of complex scene descriptions, and improved consistency between prompt intent and generated output.

Kandinsky 4.0 Pipeline Comprehensive Overview

While 5.0 Lite focuses on accessible I2V, Kandinsky 4.0 provides a complete multi-modal generation pipeline covering text-to-video, image-to-video, text-to-image-to-video, and video-to-audio generation.

Generation Mode Breakdown

| Mode | Input | Output | Best Use Case |
|---|---|---|---|
| T2V | Text prompt | Video | Conceptual video from descriptions |
| I2V | Image + prompt | Video | Animating existing artwork or photos |
| T2I2V | Text prompt | Image then Video | Complete creative pipeline |
| V2A | Video | Audio | Adding sound to silent generations |

Text-to-Video Capabilities

Kandinsky 4.0 T2V generates video directly from text prompts with quality competitive with other open-source alternatives. The model handles various content types from realistic scenes to stylized artistic content.

Prompt engineering for Kandinsky T2V follows similar principles to other video models. Detailed descriptions of motion, lighting, and camera movement produce better results than simple subject descriptions.

Image-to-Video Animation

The I2V mode excels at bringing static images to life with natural motion. Feed it a photograph, illustration, or AI-generated image, and it produces video with appropriate movement based on content understanding.

Character portraits receive natural head movements and expressions. Landscape images gain subtle environmental motion like wind through trees or flowing water. Product shots can gain rotation or demonstration movements.

Text-to-Image-to-Video Pipeline

The T2I2V mode chains generation stages for complete creative control. First generate an image from your text prompt, then animate that specific image into video.

This approach provides intermediate checkpoints where you can verify the generated image meets expectations before committing to video generation. It often produces more consistent results than direct T2V because the image stage locks in visual elements before motion is added.

Video-to-Audio Generation

The V2A capability adds appropriate audio to generated videos. While audio quality doesn't match dedicated audio models, it provides useful placeholder sound design and can produce surprisingly effective results for ambient scenes.

For professional productions requiring high-quality audio, consider this as a draft for timing reference rather than final output. For social media and casual content, the generated audio often suffices.

Accelerated Distilled Version

The accelerated distilled Kandinsky 4.0 variant represents perhaps the most impressive technical achievement in the pipeline. This optimized version generates 12-second 480p videos in just 11 seconds on a single GPU.

| Metric | Standard Model | Distilled Version | Improvement |
|---|---|---|---|
| Generation Time (12s video) | 3-5 minutes | 11 seconds | 15-30x faster |
| Resolution | 720p+ | 480p | Trade-off |
| Quality | Maximum | Very Good | Acceptable for most uses |
| Iteration Speed | Slow | Near real-time | Dramatic improvement |
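As a quick sanity check on the table's speedup figure, the arithmetic can be worked through directly from the quoted timings. The only inputs are the numbers stated above (3-5 minutes versus 11 seconds for a 12-second clip); nothing here is a new benchmark.

```python
DISTILLED_SECONDS = 11                      # quoted distilled generation time
CLIP_SECONDS = 12                           # length of the generated clip
STANDARD_RANGE_SECONDS = (3 * 60, 5 * 60)   # quoted 3-5 minutes, in seconds


def speedup(standard_seconds, distilled_seconds=DISTILLED_SECONDS):
    """How many times faster the distilled model is than the standard one."""
    return standard_seconds / distilled_seconds


low, high = (speedup(s) for s in STANDARD_RANGE_SECONDS)
# Above 1.0 means the clip is generated faster than it takes to play back.
realtime_factor = CLIP_SECONDS / DISTILLED_SECONDS

print(f"speedup: {low:.1f}x to {high:.1f}x")          # speedup: 16.4x to 27.3x
print(f"real-time factor: {realtime_factor:.2f}")     # real-time factor: 1.09
```

The computed range of roughly 16x to 27x sits inside the rounded 15-30x figure, and the real-time factor just above 1 is what makes the "near real-time" label reasonable.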

This speed enables rapid iteration workflows impossible with standard video models. Test prompts, compositions, and creative directions in seconds rather than minutes.

For professional workflows at Apatero.com, this rapid iteration capability transforms video generation from a batch process to an interactive creative tool.

Hardware Requirements and System Configuration

Successful Kandinsky deployment requires understanding hardware requirements across different configurations and use cases.

Minimum Requirements for Kandinsky 5.0 Lite I2V

| Component | Minimum | Recommended | Optimal |
|---|---|---|---|
| GPU VRAM | 12GB | 16GB | 24GB |
| System RAM | 24GB | 32GB | 64GB |
| Storage | 50GB free | 100GB SSD | NVMe SSD |
| CPU | Modern 6-core | 8-core | 12-core+ |

Requirements for Kandinsky 4.0 Full Pipeline

| Component | Minimum | Recommended | Optimal |
|---|---|---|---|
| GPU VRAM | 16GB | 24GB | 48GB |
| System RAM | 32GB | 64GB | 128GB |
| Storage | 100GB free | 250GB SSD | NVMe SSD |
| CPU | 8-core | 12-core | 16-core+ |
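The two requirement tables above can be turned into a simple pre-flight check. This is a minimal sketch: the model keys are labels invented for illustration, and only the minimum VRAM and RAM figures from the tables are encoded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Minimum:
    vram_gb: int
    ram_gb: int


# Minimum figures copied from the two requirement tables above.
# The dictionary keys are illustrative labels, not official model IDs.
MINIMUMS = {
    "kandinsky-5.0-lite-i2v": Minimum(vram_gb=12, ram_gb=24),
    "kandinsky-4.0": Minimum(vram_gb=16, ram_gb=32),
}


def meets_minimum(vram_gb, ram_gb):
    """List the models whose stated minimum VRAM and RAM this system satisfies."""
    return [
        name
        for name, minimum in MINIMUMS.items()
        if vram_gb >= minimum.vram_gb and ram_gb >= minimum.ram_gb
    ]


print(meets_minimum(vram_gb=12, ram_gb=32))  # ['kandinsky-5.0-lite-i2v']
```

Meeting the minimum only indicates that a model should load; the Recommended and Optimal columns are the better guide for comfortable production use.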

GPU Compatibility Guide

| GPU Model | VRAM | Kandinsky 5.0 Lite | Kandinsky 4.0 | Distilled 4.0 |
|---|---|---|---|---|
| RTX 3060 | 12GB | Good | Limited | Excellent |
| RTX 3080 | 10GB | Limited | No | Good |
| RTX 3090 | 24GB | Excellent | Good | Excellent |
| RTX 4070 | 12GB | Good | Limited | Excellent |
| RTX 4080 | 16GB | Excellent | Good | Excellent |
| RTX 4090 | 24GB | Excellent | Excellent | Excellent |

For RTX 3090 optimization techniques applicable to Kandinsky workflows, see our RTX 3090 optimization guide.

Storage Considerations

Model files require significant storage space. Plan for approximately 15-20GB for Kandinsky 5.0 Lite components and 30-40GB for complete Kandinsky 4.0 pipeline including all generation modes.

Fast storage dramatically improves model loading times. NVMe SSDs load models 3-5x faster than traditional hard drives, with SATA SSDs providing intermediate performance.

Memory Offloading Configuration

For systems using RAM offloading to meet minimum requirements, configure your Python environment with appropriate memory management.

Avoid relying on virtual memory or swap space for offloaded components, since paging to disk causes thrashing and slows generation dramatically. Close memory-intensive applications before generation. Monitor system RAM usage during generation to identify bottlenecks.

Step-by-Step Setup Guide

Getting Kandinsky running requires several installation and configuration steps. Follow this guide for both 5.0 Lite I2V and 4.0 pipeline setups.

Prerequisites Installation

Before installing Kandinsky, ensure your system has Python 3.10 or 3.11 installed along with current GPU drivers. CUDA toolkit 11.8 or newer is required for NVIDIA GPUs.

Verify your PyTorch installation includes CUDA support by checking that torch.cuda.is_available() returns True in a Python environment. For PyTorch CUDA setup assistance, see our PyTorch CUDA guide.
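The CUDA check described above can be wrapped in a short report function. This sketch uses only standard PyTorch calls and degrades gracefully when PyTorch is not installed; the dictionary keys are arbitrary names chosen for this example.

```python
def cuda_report():
    """Summarize whether PyTorch is installed and can see a CUDA device."""
    try:
        import torch
    except ImportError:
        return {"torch_installed": False, "cuda_available": False}

    report = {
        "torch_installed": True,
        "torch_version": torch.__version__,
        "cuda_available": torch.cuda.is_available(),
        "cuda_build": torch.version.cuda,  # None on CPU-only builds
    }
    if report["cuda_available"]:
        # Report the first GPU's name and total memory in GB.
        report["device_name"] = torch.cuda.get_device_name(0)
        props = torch.cuda.get_device_properties(0)
        report["vram_gb"] = round(props.total_memory / 1024**3, 1)
    return report


print(cuda_report())
```

If `cuda_available` is `False` while `cuda_build` is `None`, the installed PyTorch is a CPU-only build and needs to be replaced with a CUDA-enabled one.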

Kandinsky 5.0 Lite I2V Installation

Step one involves cloning the official Kandinsky repository from the Sber AI Research GitHub. This repository contains model code, configuration files, and example scripts.

Step two requires downloading model weights. The text encoder, VAE, and I2V model weights total approximately 15-20GB. Download from official Hugging Face repositories to ensure correct weights.

Step three configures the attention mechanism. For NVIDIA Ampere architecture GPUs including RTX 30-series and newer, install Flash Attention 2 through pip. For other GPUs, Sage Attention provides excellent performance with broader compatibility.

Step four verifies the installation by running the included test script with a simple prompt. Successful generation confirms correct setup.

Kandinsky 4.0 Pipeline Installation

The 4.0 installation follows similar patterns but includes additional components for the complete multi-modal pipeline.

Download all generation mode weights including T2V, I2V, T2I2V, and V2A components. Total download size approaches 30-40GB for the complete pipeline.

Configure each generation mode according to your intended use cases. Most users can skip V2A installation initially and add it later if audio generation becomes necessary.

Accelerated Distilled Model Setup

The distilled variant requires specific model weights separate from the standard 4.0 weights. Download the distilled checkpoint explicitly rather than attempting to use standard weights with distillation flags.

Distilled model configuration optimizes for speed over maximum quality. Default settings provide the 11-second generation time for 12-second videos.

Attention Mechanism Installation

For Flash Attention 2 on compatible systems, install through pip with the flash-attn package. This requires CUDA toolkit and compatible compilers.

Sage Attention installation follows standard pip procedures with fewer compilation dependencies. Most systems can install Sage Attention without issues that sometimes affect Flash Attention compilation.

Verification Testing

Test each installed component before attempting complex workflows. Generate a simple 2-second clip with the I2V model to verify basic functionality. Test T2V, T2I2V, and V2A modes individually if installing the complete 4.0 pipeline.

Common verification issues include incorrect model paths, incompatible attention mechanisms, and insufficient memory. Error messages typically indicate the specific problem requiring resolution.

Optimization Techniques for Maximum Performance

Beyond basic installation, optimization techniques extract maximum performance and quality from Kandinsky models.

Attention Mechanism Selection Strategy

Test all supported attention mechanisms on your specific hardware. Performance differences can be substantial between Flash Attention 2, Sage Attention, and SDPA on different GPU architectures.

| Optimization | Performance Impact | Memory Impact | Compatibility |
|---|---|---|---|
| Flash Attention 2 | 30-40% faster | 40% reduction | Ampere+ |
| Sage Attention | 20-30% faster | 30% reduction | Broad |
| SDPA | Baseline | Baseline | Universal |

Memory Optimization Strategies

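Gradient checkpointing trades computation for memory, but it only helps during training or fine-tuning, where activations are stored for the backward pass. For inference, the practical levers are component offloading, lower resolution, and shorter clip durations, which keep generation within the same VRAM constraints.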

Configure aggressive offloading for systems at minimum memory requirements. Move model components to system RAM immediately after use rather than keeping them in VRAM.
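One way to express the "move it out immediately after use" pattern is a context manager that places a component on the GPU only for the duration of a block. This is a generic sketch, not Kandinsky's built-in offloading: it assumes each component exposes a PyTorch-style `.to(device)` method, and the names in the usage comment are hypothetical.

```python
from contextlib import contextmanager


@contextmanager
def on_device(module, device="cuda", offload_to="cpu"):
    """Move `module` to `device` for the duration of the block, then offload it."""
    module.to(device)
    try:
        yield module
    finally:
        # Runs even if generation raises, so the module is always moved back.
        module.to(offload_to)


# Hypothetical usage with a PyTorch module named `text_encoder`:
# with on_device(text_encoder) as encoder:
#     embeddings = encoder(prompt_tokens)
```

Wrapping the text encoder, generation model, and VAE in turn keeps only one of them in VRAM at a time, at the cost of extra transfer time between stages.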

For comprehensive VRAM optimization applicable to Kandinsky and other models, reference our complete low-VRAM survival guide.

Generation Parameter Tuning

| Parameter | Conservative | Balanced | Quality-Focused |
|---|---|---|---|
| Steps | 15-20 | 25-30 | 40-50 |
| CFG Scale | 6-7 | 7-8 | 8-10 |
| Resolution | 480p | 720p | 1080p |

Conservative settings enable rapid iteration for concept testing. Balanced settings suit most production work. Quality-focused settings maximize output quality for final renders.
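The three tiers can be kept as named presets so that a project switches between them with one key. This is a minimal sketch that stores the ranges from the table verbatim; the `Preset` class and `midpoint` helper are illustrative names, not part of any Kandinsky API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preset:
    steps: tuple      # (min, max) sampling steps
    cfg_scale: tuple  # (min, max) guidance scale
    resolution: str


# Ranges copied from the parameter table above.
PRESETS = {
    "conservative": Preset(steps=(15, 20), cfg_scale=(6, 7), resolution="480p"),
    "balanced": Preset(steps=(25, 30), cfg_scale=(7, 8), resolution="720p"),
    "quality": Preset(steps=(40, 50), cfg_scale=(8, 10), resolution="1080p"),
}


def midpoint(bounds):
    """Pick the middle of a (min, max) range as a starting value."""
    return sum(bounds) / 2


preset = PRESETS["balanced"]
print(preset.resolution, midpoint(preset.steps), midpoint(preset.cfg_scale))
# 720p 27.5 7.5
```

Step counts must be whole numbers in practice, so round the midpoint before passing it to a sampler.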

Batch Processing Optimization

When generating multiple videos, keep the model loaded in memory between generations. Model loading represents significant overhead that batching eliminates.

Configure generation queues that process prompts sequentially while maintaining model state. This approach generates 5-10 videos in the time it would take to generate 2-3 with repeated loading.
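The load-once pattern can be sketched in a few lines. Both `load_model` and the callable it returns are placeholders for whatever loading and generation functions your setup provides; the point is only that loading happens outside the loop.

```python
def run_batch(load_model, prompts):
    """Load the model once, then generate every prompt with the same instance."""
    model = load_model()  # expensive step, paid once per batch
    results = []
    for prompt in prompts:
        # Prompts run sequentially while the model stays in memory.
        results.append(model(prompt))
    return results
```

Compared with launching a fresh process per prompt, this removes one model load for every video after the first.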

Hardware-Specific Tuning

Different GPU architectures respond to different optimization combinations. RTX 40-series cards tend to benefit most from Flash Attention 2, which leans heavily on tensor cores. RTX 30-series cards also support Flash Attention 2, though Sage Attention with tuned memory allocation may perform better on some setups.

Test your specific hardware configuration with benchmark prompts to identify optimal settings before committing to production workflows.

Model Comparison: Kandinsky 5.0 Lite vs 4.0

Understanding the differences between Kandinsky versions helps select the right model for specific projects and workflows.

Architecture Comparison

| Aspect | Kandinsky 5.0 Lite I2V | Kandinsky 4.0 |
|---|---|---|
| Focus | Efficient I2V | Complete pipeline |
| Parameters (T2V) | 2B | Larger |
| Text Encoder | Qwen 2.5VL 7B | Earlier encoder |
| VAE | HunyuanVideo | Kandinsky native |
| Min GPU Memory | 12GB | 16GB |
| Generation Modes | I2V only | T2V, I2V, T2I2V, V2A |
| Distilled Option | Not yet | Yes (11s for 12s video) |

Quality Comparison

| Quality Metric | 5.0 Lite I2V | 4.0 Standard | 4.0 Distilled |
|---|---|---|---|
| Motion smoothness | Excellent | Excellent | Very Good |
| Detail retention | Excellent | Excellent | Good |
| Prompt adherence | Excellent | Very Good | Good |
| Temporal consistency | Excellent | Excellent | Good |
| Color accuracy | Excellent | Excellent | Very Good |

Use Case Recommendations

| Use Case | Recommended Model | Reason |
|---|---|---|
| Quick iteration | 4.0 Distilled | 11-second generation |
| Maximum quality I2V | 5.0 Lite | Latest architecture |
| Complete pipeline | 4.0 Standard | All generation modes |
| Limited hardware | 5.0 Lite | 12GB minimum |
| Commercial production | Either | Apache 2.0 license |
| Custom development | Either | Simple architecture |

Migration Considerations

Users of earlier Kandinsky versions should note that 5.0 Lite uses different component models including the Qwen 2.5VL text encoder and HunyuanVideo VAE. Existing prompts may require adjustment to achieve similar results with the new architecture.

The latest components generally provide better prompt understanding and video quality, but established workflows may need refinement during migration.

ComfyUI Integration Status and Workflows

The ComfyUI community has expressed strong interest in Kandinsky integration through Issue #10134, though official node support remains in development.

Current Integration Options

While waiting for official ComfyUI nodes, several approaches enable Kandinsky use within ComfyUI-adjacent workflows.

External script integration allows calling Kandinsky from ComfyUI workflows through custom nodes that execute Python scripts. This approach works but lacks the visual node-based configuration of native integration.

Hybrid workflows generate elements in Kandinsky externally then import results into ComfyUI for post-processing, composition, and combination with other generated content.

Expected Official Integration

Based on community request patterns and developer responses, official ComfyUI nodes for Kandinsky will likely include model loader nodes for each variant, text and image input handling, generation parameter configuration, and video output and format conversion.

The simple Kandinsky architecture should translate well to ComfyUI's node-based approach with straightforward connections between components.

Workflow Preparation

While awaiting official support, prepare workflows by establishing post-processing pipelines in ComfyUI that accept video input. This includes upscaling with nodes like SeedVR2, frame interpolation, color grading, and composition with other elements.

When official Kandinsky nodes arrive, these post-processing workflows will immediately enhance Kandinsky output without additional development.

Integration Timeline Expectations

Community-requested ComfyUI integrations typically arrive within weeks to months of significant interest. Given the active Issue #10134 discussion and Kandinsky's Apache 2.0 license encouraging community development, expect integration options to expand throughout late 2025 and early 2026.

For users needing immediate access to Kandinsky capabilities within production workflows, Apatero.com continues evaluating integration timelines and may provide early access as developments progress.

Professional Production Workflows

Moving beyond experimentation requires optimized workflows balancing quality, speed, and reliability for professional video production.

Rapid Concept Development

Use Kandinsky 4.0 distilled for initial concept exploration. Generate multiple variations in minutes rather than hours to quickly identify promising directions.

| Phase | Model | Settings | Purpose |
|---|---|---|---|
| Concepts | 4.0 Distilled | 480p, 15 steps | Fast variation testing |
| Refinement | 5.0 Lite or 4.0 | 720p, 25 steps | Quality verification |
| Final | 5.0 Lite or 4.0 | 1080p, 40 steps | Production output |

Client Presentation Workflow

Generate quick preview versions with the distilled model for client review. Upon approval, regenerate with full models at maximum quality for delivery.

This approach minimizes wasted computation on concepts that won't proceed to final production while ensuring delivered content meets professional standards.

Batch Production Pipeline

For projects requiring multiple videos with consistent style, establish generation templates with locked parameters. Queue all prompts and generate overnight to maximize hardware use.

Post-process the entire batch through consistent color grading and formatting pipelines to ensure visual coherence across deliverables.

Quality Control Checkpoints

Review generated video at 50% speed to identify motion artifacts invisible at normal playback. Check temporal consistency by examining specific frames across the duration. Verify prompt adherence by comparing intended versus actual scene elements.
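For the half-speed review pass, one option is to re-time the clip with ffmpeg's `setpts` video filter. The helper below only builds the command; it assumes ffmpeg is installed and on your PATH, and the file names in the usage comment are placeholders.

```python
def half_speed_command(src, dst, slowdown=2.0):
    """Build an ffmpeg command that stretches video timestamps by `slowdown`."""
    return [
        "ffmpeg",
        "-i", src,
        "-filter:v", f"setpts={slowdown}*PTS",  # 2.0*PTS plays at 50% speed
        "-an",  # drop audio, which would otherwise fall out of sync
        dst,
    ]


# Hypothetical usage:
# import subprocess
# subprocess.run(half_speed_command("clip.mp4", "clip_review.mp4"), check=True)
```

Passing the command as a list rather than a shell string avoids quoting problems with file names that contain spaces.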

Establish rejection criteria and regeneration protocols before beginning batch production to maintain consistent quality standards.

Multi-Model Combination Strategies

Combine Kandinsky strengths with other models for comprehensive projects. Use Kandinsky I2V to animate characters with its efficient architecture, then composite with background video from other sources. Add audio through Kandinsky V2A or dedicated audio models.

This modular approach uses each model's strengths while minimizing weaknesses.

Kandinsky Versus Alternative Video Models

Understanding how Kandinsky compares to other video generation options helps identify optimal model selection for different projects.

Comparison with Leading Alternatives

| Factor | Kandinsky | Wan2.2 | HunyuanVideo | Mochi 1 |
|---|---|---|---|---|
| License | Apache 2.0 | Apache 2.0 | Restrictive | Open |
| Min VRAM | 12GB | 4GB (GGUF) | 12GB | 8GB |
| I2V Quality | Excellent | Excellent | Very Good | Good |
| T2V Quality | Very Good | Very Good | Excellent | Good |
| Distilled Option | Yes (11s) | No | No | No |
| Architecture Simplicity | Excellent | Moderate | Complex | Moderate |

Kandinsky Advantages

The Apache 2.0 license distinguishes Kandinsky for commercial applications where licensing restrictions create legal complexity. The simple architecture enables customization impossible with more complex alternatives.

The distilled 4.0 variant provides iteration speed unmatched by any alternative, enabling workflow approaches impossible with slower generation times.

Kandinsky Limitations

Community adoption trails behind Wan2.2 and HunyuanVideo, meaning fewer tutorials, shared workflows, and community support resources. ComfyUI integration lags behind alternatives with established custom nodes.

VRAM requirements exceed GGUF-quantized Wan2.2, limiting accessibility for users with 4-8GB GPUs.

Selection Guidance

Choose Kandinsky when Apache 2.0 licensing matters for commercial use, when rapid iteration through distilled models benefits your workflow, when architectural simplicity enables necessary customization, or when 12GB VRAM availability meets your hardware constraints.

Choose alternatives when minimum hardware requirements must be below 12GB, when extensive community resources and tutorials matter, when native ComfyUI integration is essential, or when established workflows already use other models.

For comprehensive comparison of video generation approaches, see our text2video versus image2video versus video2video guide.

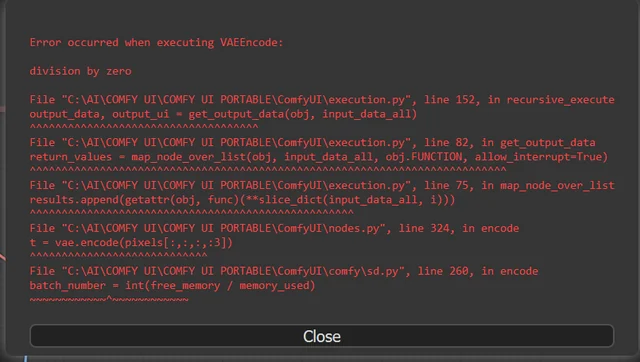

Troubleshooting Common Issues

Kandinsky installation and operation occasionally run into issues, most of which have straightforward solutions.

Memory Errors During Generation

Out of memory errors typically indicate insufficient VRAM for selected resolution and settings. Reduce resolution from 1080p to 720p or 480p. Decrease step count. Enable aggressive offloading. Close other applications using GPU memory.
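The "step down until it fits" advice can be automated with a small retry ladder. This is a sketch under assumptions: `generate` stands in for whatever function runs your pipeline at a given resolution, and the exception types to treat as out-of-memory are passed in by the caller.

```python
RESOLUTION_LADDER = ["1080p", "720p", "480p"]


def generate_with_fallback(generate, start="1080p", oom_errors=(MemoryError,)):
    """Try `generate(resolution)` from `start` downward until one attempt fits."""
    for resolution in RESOLUTION_LADDER[RESOLUTION_LADDER.index(start):]:
        try:
            return resolution, generate(resolution)
        except oom_errors:
            continue  # out of memory: retry one rung lower
    raise RuntimeError("out of memory even at the lowest resolution")
```

With PyTorch, passing `oom_errors=(torch.cuda.OutOfMemoryError,)` should catch CUDA out-of-memory failures on recent versions. The returned resolution tells you which rung succeeded, so later runs can start there.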

If errors persist at minimum settings, your GPU may not meet 12GB minimum requirements for 5.0 Lite.

Slow Generation Performance

Unexpectedly slow generation often indicates CPU fallback instead of GPU acceleration. Verify CUDA availability and correct PyTorch installation. Ensure correct attention mechanism for your hardware.

Generation times should be seconds to minutes depending on settings. Times extending to tens of minutes or hours suggest configuration problems.

Quality Issues

Poor output quality with appropriate prompts may indicate incorrect model weights, improper configuration, or incompatible component versions. Verify model files match expected checksums. Ensure text encoder, VAE, and generation model versions are compatible.

Attention Mechanism Failures

Flash Attention 2 compilation errors are common on systems without appropriate compilers or CUDA toolkit versions. Fall back to Sage Attention or SDPA rather than struggling with Flash Attention compilation issues.

Model Loading Failures

Loading errors typically indicate incorrect file paths or corrupted downloads. Verify model files are complete and correctly placed. Re-download any files with unexpected sizes or checksum failures.
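Checksum verification needs nothing beyond the standard library. The sketch below streams a file through SHA-256 so that multi-gigabyte weights are never read into memory at once. The expected hash has to come from the model's official download page; the value passed in is whatever that page publishes.

```python
import hashlib


def sha256_of(path, chunk_size=1024 * 1024):
    """Compute a file's SHA-256 hex digest, reading it in 1 MB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path, expected_hex):
    """Return True when the file's digest matches the published checksum."""
    return sha256_of(path) == expected_hex.strip().lower()
```

A mismatch almost always means an interrupted or corrupted download, so re-download the file before investigating anything else.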

For general ComfyUI troubleshooting applicable to Kandinsky workflows, see our red box troubleshooting guide.

Future Development and Roadmap

Understanding Kandinsky development direction helps with planning and investment decisions.

Expected Near-Term Developments

Community interest in ComfyUI integration will likely drive official node development. The simple Kandinsky architecture translates naturally to node-based workflows.

Quantized variants similar to GGUF for other models would reduce VRAM requirements below the current 12GB minimum, expanding accessibility significantly.

Potential Architecture Improvements

The Qwen 2.5VL and HunyuanVideo VAE components in 5.0 Lite suggest continued integration of best-in-class components from the broader ecosystem. Future versions may incorporate additional improvements as they become available.

Longer generation durations beyond the current 5-second and 12-second limits would address a common limitation across current video models.

Community Development Opportunities

The Apache 2.0 license and simple architecture explicitly invite community contributions. Expect specialized variants, custom training, and workflow integrations from community developers.

This open development model has historically produced rapid innovation as the community explores applications the original developers didn't anticipate.

Commercial Integration Trends

Platforms like Apatero.com continue evaluating open-source video models for integration. Kandinsky's licensing and architecture make it particularly suitable for commercial platform deployment.

As integration options mature, expect broader access to Kandinsky capabilities through simplified interfaces that abstract technical complexity.

Frequently Asked Questions

What GPU do I need for Kandinsky 5.0 Lite I2V?

Kandinsky 5.0 Lite I2V requires a minimum of 12GB GPU VRAM with 24GB system RAM for full pipeline operation with offloading. RTX 3060 12GB, RTX 4070, and RTX 3090 all meet or exceed these requirements. Lower VRAM GPUs cannot currently run 5.0 Lite even with optimization.

How fast is the Kandinsky 4.0 distilled model?

The accelerated distilled Kandinsky 4.0 generates 12-second videos at 480p resolution in just 11 seconds on a single GPU. This represents approximately 15-30x speed improvement over standard models, enabling rapid iteration impossible with typical video generation times measured in minutes.

Can I use Kandinsky commercially?

Yes, Kandinsky uses the Apache 2.0 license, which explicitly permits commercial use, modification, distribution, and private use. This makes it one of the most permissive open-source video models available for business and freelance applications, with no licensing fees and no attribution requirement on the videos you generate. Redistributing the model code or weights does require including the license and any notices.

What's the difference between Kandinsky 5.0 Lite and 4.0?

Kandinsky 5.0 Lite I2V focuses specifically on efficient image-to-video with modern components including Qwen 2.5VL text encoding and HunyuanVideo VAE, requiring only 12GB VRAM. Kandinsky 4.0 provides a complete pipeline covering T2V, I2V, T2I2V, and V2A with higher requirements but more generation modes including an ultra-fast distilled option.

Does Kandinsky work with ComfyUI?

Official ComfyUI integration is currently in development with community interest expressed through Issue #10134. Current options include external script integration and hybrid workflows. Native node support should arrive as community developers create custom nodes using Kandinsky's simple architecture.

How does Kandinsky quality compare to Wan2.2?

Kandinsky and Wan2.2 produce comparable quality for most use cases. Kandinsky advantages include Apache 2.0 licensing and ultra-fast distilled generation. Wan2.2 advantages include lower VRAM requirements through GGUF quantization and established ComfyUI integration. Choose based on licensing needs, hardware constraints, and workflow requirements.

What attention mechanisms does Kandinsky support?

Kandinsky 5.0 Lite supports Flash Attention 2 for NVIDIA Ampere and newer GPUs, Sage Attention for broad compatibility with excellent performance, and SDPA through PyTorch's native implementation for universal compatibility. Flash Attention 2 provides 30-40% speed improvements on compatible hardware.
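A minimal sketch of how a launch script might choose among these three options, assuming a preference order of Flash Attention 2, then Sage Attention, then SDPA. The helper `pick_attention_backend`, the returned labels, and the module names `flash_attn` and `sageattention` are assumptions for illustration, not Kandinsky's own configuration API. The check only looks at which packages are installed; it does not verify that the GPU is Ampere or newer.

```python
import importlib.util
from typing import Callable

# Preference order follows the FAQ answer above.
# Each entry is (label, importable module name); both are illustrative assumptions.
PREFERRED_BACKENDS = [
    ("flash_attention_2", "flash_attn"),
    ("sage_attention", "sageattention"),
]


def module_installed(name: str) -> bool:
    """Return True if a top-level module can be found without importing it."""
    return importlib.util.find_spec(name) is not None


def pick_attention_backend(
    is_installed: Callable[[str], bool] = module_installed,
) -> str:
    """Return the first preferred backend whose package is installed.

    Falls back to "sdpa", which ships with PyTorch and needs no extra package.
    """
    for label, module in PREFERRED_BACKENDS:
        if is_installed(module):
            return label
    return "sdpa"


print(pick_attention_backend())
```

Passing the installation check in as a parameter keeps the selection logic testable on machines where none of the optional packages are present.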

Can Kandinsky generate audio for videos?

Kandinsky 4.0 includes V2A (video-to-audio) generation, which adds matching audio to generated videos. The quality is suitable for draft timing references and casual content. Professional productions that need high-quality audio should treat the result as a starting point for dedicated audio production.

Conclusion and Recommendations

Kandinsky represents a compelling option in the 2025 video generation space through its unique combination of Apache 2.0 licensing, efficient hardware requirements, and remarkably fast distilled generation options.

Who Should Use Kandinsky

Commercial creators benefit most from the permissive licensing, which avoids legal complexity. Rapid iteration workflows benefit from the distilled model's 11-second generation times. Developers and researchers will appreciate the simple architecture, which makes customization easier. Users with 12GB GPUs get an efficient model that makes the most of their hardware.

Recommended Starting Configuration

Begin with Kandinsky 5.0 Lite I2V if your primary use case is animating existing images. The modern architecture with Qwen 2.5VL encoding and HunyuanVideo VAE provides excellent results with 12GB minimum requirements.

For rapid experimentation or complete pipeline needs, install Kandinsky 4.0 with the distilled variant. The speed enables creative exploration impossible with slower alternatives.

Integration with Existing Workflows

Prepare post-processing workflows in ComfyUI now to enhance Kandinsky output when official integration arrives. Establish upscaling, frame interpolation, and composition pipelines that accept video input for immediate productivity when nodes become available.

Hardware Investment Guidance

If considering GPU upgrades for video generation, target 12GB minimum for Kandinsky 5.0 Lite or 16GB and above for full 4.0 pipeline access. The RTX 4070 12GB and RTX 3090 24GB represent excellent value propositions for Kandinsky workflows.
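As a rough illustration, the thresholds quoted in this guide (12GB VRAM and 24GB system RAM for 5.0 Lite with offloading, 16GB VRAM and above for the full 4.0 pipeline) can be encoded as a small check. The function name and structure are hypothetical, and the numbers are only the ones stated in this article, not measured requirements.

```python
# Thresholds as stated in this guide (not independently measured).
MIN_VRAM_5_LITE_GB = 12   # Kandinsky 5.0 Lite I2V
MIN_RAM_OFFLOAD_GB = 24   # system RAM for the full 5.0 Lite pipeline with offloading
MIN_VRAM_4_0_GB = 16      # full Kandinsky 4.0 pipeline


def runnable_models(vram_gb: float, ram_gb: float) -> list[str]:
    """Return the Kandinsky variants this guide suggests for the given hardware."""
    models = []
    if vram_gb >= MIN_VRAM_5_LITE_GB and ram_gb >= MIN_RAM_OFFLOAD_GB:
        models.append("Kandinsky 5.0 Lite I2V")
    if vram_gb >= MIN_VRAM_4_0_GB:
        models.append("Kandinsky 4.0")
    return models


# Example: the two cards named above, each paired with 32GB of system RAM.
print(runnable_models(vram_gb=12, ram_gb=32))  # RTX 4070 12GB
print(runnable_models(vram_gb=24, ram_gb=32))  # RTX 3090 24GB
```

With these thresholds, a 12GB card qualifies for 5.0 Lite only, while a 24GB card qualifies for both variants.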

Production Readiness Assessment

Kandinsky is production-ready for teams comfortable with current installation procedures and script-based workflows. Organizations requiring native ComfyUI integration should wait for official node development or explore alternative models with established integration.

Future Outlook

Kandinsky development shows consistent progress with the November 2025 release of 5.0 Lite I2V demonstrating continued investment. The combination of permissive licensing, efficient requirements, and fast iteration positions Kandinsky well for expanded adoption as video generation workflows mature throughout 2026.

For creators serious about video generation with commercial applications, Kandinsky deserves evaluation alongside more widely discussed alternatives. The licensing and speed advantages may outweigh community size and integration maturity limitations depending on specific workflow requirements.

Visit Apatero.com for continued updates on Kandinsky integration developments and access to improved video generation workflows as the ecosystem evolves. The platform monitors open-source video models including Kandinsky for integration opportunities that benefit professional creators requiring reliable, licensed generation capabilities.
